Why pre-launch website audits fail without automated test execution

Most website audits don't fail because they miss issues.

They fail because they never validate the fixes.

The audit execution gap

Teams run pre-launch audits. They identify broken links, slow load times, accessibility violations. They fix them in staging. Then they deploy without confirming those fixes survive subsequent code changes.

The problem isn't audit methodology. It's the lack of validation infrastructure that runs tests on every commit. A one-time audit report tells you what's broken today. It doesn't tell you if your fixes will still work tomorrow.

Only 51.8% of websites meet Core Web Vitals performance standards, according to Seoprofy's analysis of web performance data. That gap persists because identifying an issue in an audit doesn't prevent it from being deployed.

Manual checklists miss what automated execution catches

Manual website audits check performance once. They generate a snapshot. Teams fix the issues, mark them complete, and move on.

Then a developer adds a new dependency. Load times degrade. A designer refactors a component. Accessibility fixes break. A content update introduces broken links. None of this gets caught because the audit was a one-time event, not a continuous validation process.

Google's research on mobile performance shows 40-53% of users abandon sites that load too slowly. Yet most pre-launch workflows check performance once without ongoing validation across code changes. The abandonment happens because issues ship despite the audit process.

Regressions ship because fixes aren't validated continuously

Broken links reappear after subsequent commits. Core Web Vitals degrade with new dependencies. Accessibility repairs break during refactoring. These aren't new issues. They're regressions of problems already identified and supposedly fixed.

Automated parallel execution catches this. Run validation tests on every push. Confirm fixes survive deployment. Detect regressions before they reach production.
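As a rough illustration of what "detecting a regression" means here, the sketch below compares the issues an audit already marked as fixed against the findings from the latest test run. The issue keys and the `find_regressions` helper are hypothetical, chosen only for this example.

```python
def find_regressions(fixed_issues, current_findings):
    """Return issues that were marked fixed but show up again in this run."""
    return sorted(set(fixed_issues) & set(current_findings))

# Hypothetical issue keys recorded when the original audit fixes landed.
fixed_issues = [
    "broken-link:/old-blog",
    "missing-alt:/img/hero.png",
    "lcp-over-budget:/home",
]

# Hypothetical findings from the test run on the latest push.
current_findings = [
    "broken-link:/old-blog",
    "missing-label:/signup",
]

regressions = find_regressions(fixed_issues, current_findings)
print(regressions)
```

Anything in `regressions` is a problem the team already paid to fix once, which is a reasonable basis for treating it as higher priority than a brand-new finding.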

SE Ranking's analysis of search visibility found 94% of pages get zero traffic from Google. Part of that figure reflects shipped issues that audits catch in theory but validation workflows fail to prevent: SEO issues, performance bottlenecks, and broken user paths that made it past the audit because no one validated the fixes across the deployment pipeline.

What continuous validation requires

Test infrastructure that runs on every commit: Manual audits generate reports. Automated validation blocks deployments. The difference is whether issues can ship after being identified.

Parallel execution across test types: Run performance tests, accessibility checks, and link validation simultaneously. Catch regressions in any category before merge.

Clear blocking criteria: Define what breaks the build. A broken link shouldn't deploy. A Core Web Vitals regression shouldn't merge. Clear thresholds prevent judgment calls that let issues slip through.
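The three requirements above can be sketched together in a few lines. This is a minimal sketch under stated assumptions, not a description of any particular tool: the check functions, the `sample_page` data, and the zero-tolerance policy are hypothetical, and the 2.5 second limit is the commonly cited "good" threshold for Largest Contentful Paint.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed blocking threshold for Largest Contentful Paint, in seconds.
MAX_LCP_SECONDS = 2.5

def check_links(page):
    """One failure per link that returned an HTTP status of 400 or above."""
    return [f"broken link: {url} ({status})"
            for url, status in page["link_statuses"].items()
            if status >= 400]

def check_performance(page):
    """Fail when the measured LCP exceeds the assumed threshold."""
    lcp = page["lcp_seconds"]
    if lcp > MAX_LCP_SECONDS:
        return [f"LCP {lcp}s exceeds {MAX_LCP_SECONDS}s"]
    return []

def check_accessibility(page):
    """One failure per image that has no alt text."""
    return [f"image missing alt text: {src}"
            for src in page["images_without_alt"]]

CHECKS = {
    "links": check_links,
    "performance": check_performance,
    "accessibility": check_accessibility,
}

def run_checks(page):
    """Run every check concurrently and collect failures by category."""
    with ThreadPoolExecutor(max_workers=len(CHECKS)) as pool:
        futures = {name: pool.submit(check, page)
                   for name, check in CHECKS.items()}
        return {name: future.result() for name, future in futures.items()}

def exit_code(results):
    """Return 1 (block the build) if any category reported a failure."""
    return 1 if any(results.values()) else 0

# Hypothetical measurements for one page, gathered earlier in the pipeline.
sample_page = {
    "link_statuses": {"/pricing": 200, "/old-blog": 404},
    "lcp_seconds": 3.1,
    "images_without_alt": [],
}

results = run_checks(sample_page)
for category, failures in results.items():
    for failure in failures:
        print(f"[{category}] {failure}")
print("exit code:", exit_code(results))
```

In a CI job, passing the result of `exit_code(results)` to `sys.exit` would be one way to turn these thresholds into a failed build, since most CI systems treat a non-zero exit status as a blocking failure.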

How QA flow closes the execution gap

QA flow runs tests on every commit. The audit tickets system groups issues by type and priority rather than overwhelming teams with scattered findings. Broken links get caught when they're introduced. Performance regressions trigger alerts before deployment. Accessibility violations block merges until they're resolved.

This approach applies test design techniques to website validation. Equivalence partitioning reduces redundant checks. Boundary value analysis finds edge cases in form validation. State transition testing checks multi-step user flows after code changes.
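To make boundary value analysis concrete, here is a small sketch for a form field. The 3 to 20 character username rule and both function names are assumptions made up for the example. The idea is to test just below, at, and just above each limit instead of testing every possible length.

```python
def boundary_values(minimum, maximum):
    """Lengths just below, at, and just above each limit of the valid range."""
    return sorted({minimum - 1, minimum, minimum + 1,
                   maximum - 1, maximum, maximum + 1})

def is_valid_username(name):
    """Hypothetical form rule: usernames must be 3 to 20 characters long."""
    return 3 <= len(name) <= 20

# Build one test input per boundary length and record the validator's answer.
cases = {length: is_valid_username("a" * length)
         for length in boundary_values(3, 20)}

for length, accepted in cases.items():
    print(f"length {length}: {'accepted' if accepted else 'rejected'}")
```

Six inputs cover the places where an off-by-one error in the validation logic would show up, which is the kind of regression a refactor can introduce without anyone noticing.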

The infrastructure gap closes when validation becomes continuous rather than episodic.

Pre-launch audit tools focus on detection, not validation

Most website audit tools generate reports. They tell you what's broken. They don't run validation tests on every push to confirm fixes survive deployment.

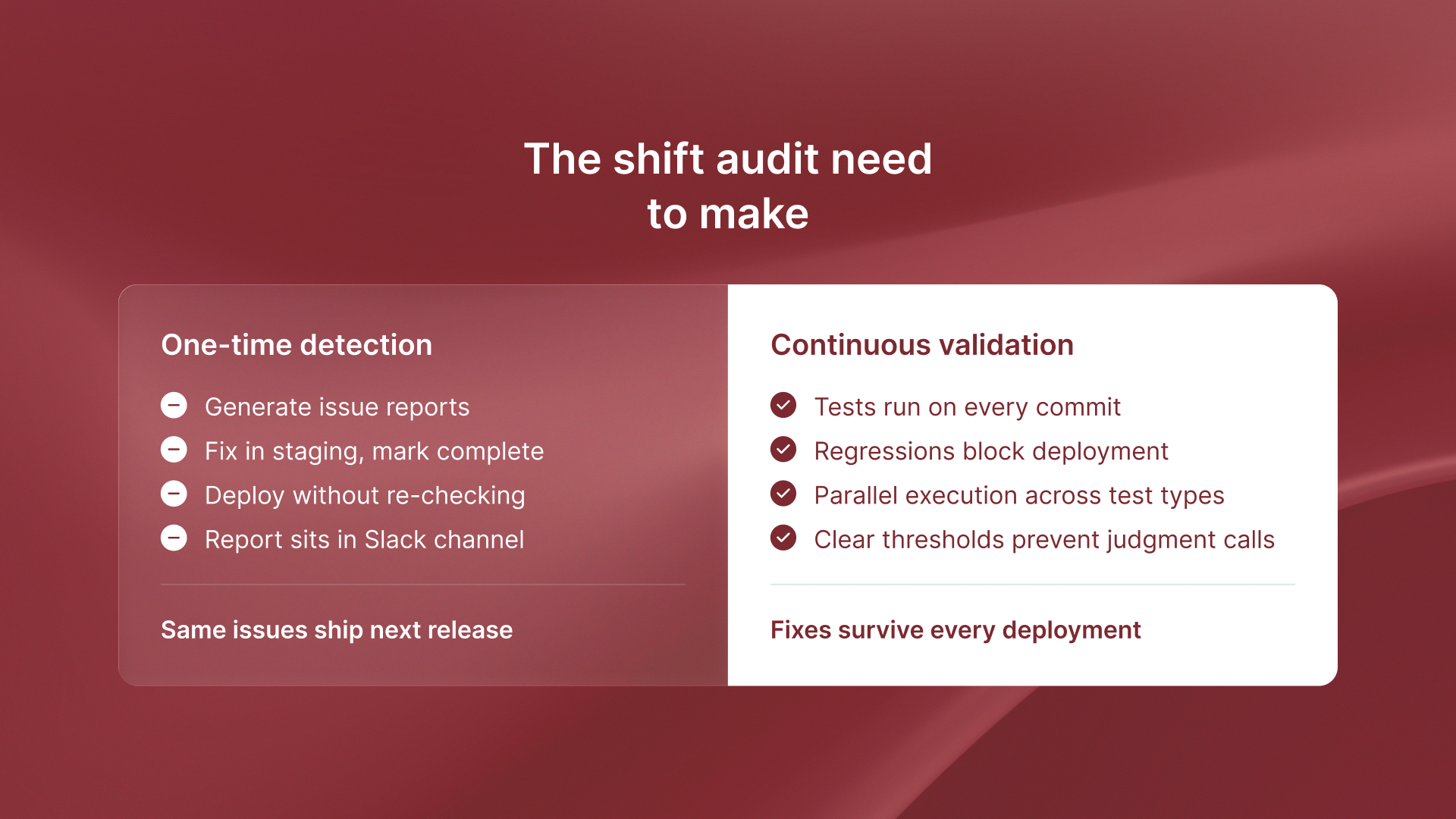

That's the infrastructure gap. Detection without execution. Identification without prevention. Audit reports that sit in Slack channels while the same issues ship in the next release.

The audit process needs to shift from one-time detection to continuous validation. From issue reports to automated test execution. From manual spot-checks to parallel test runs that validate fixes across every code change.

The takeaway

Pre-launch audits aren't about generating issue reports. They're about validating that fixes survive deployment.

Automated execution closes the gap.
