Autonomous testing vs automated testing: the architectural difference that matters

Most AI-powered testing tools are automated testing with better prompts. True autonomous testing is architecturally different.

Every testing vendor now claims AI capabilities. The term means wildly different things. Some tools use AI to generate Selenium scripts faster. Others use AI to suggest test cases based on code changes. A few use multi-agent systems to create, run, and maintain tests from design specs, without human-written test cases.

For engineering leaders evaluating tools, this distinction matters. AI-assisted automation improves efficiency. Autonomous generation changes what a QA team can cover. This post explains the key difference between automated test execution and autonomous test generation. It also explains why Forrester created a new research category to separate them.

The core distinction

Automated testing executes human-written test scripts faster. Autonomous testing generates, executes, and maintains tests without human-defined test cases. The difference lies in where test intent originates.

In 2025, Forrester renamed its research category from Continuous Automation Testing Platforms to Autonomous Testing Platforms. It defined autonomous testing as AI agents that write, run, and maintain tests, in contrast to automated testing, where humans write tests and machines execute them. This wasn't semantics. It was recognition that the architectures are fundamentally different.

Automated testing tools take human-written test cases and make them run faster, more reliably, across more browsers. Autonomous testing platforms derive test cases from design specs and code commits, removing the human test-writing bottleneck entirely.

Why most AI testing tools aren't actually autonomous

According to Katalon’s 2025 State of Software Quality Report, 61% of QA teams use AI-driven testing to automate routine tasks. But only 15% have achieved enterprise-scale implementation. The gap exists because most tools aren't delivering true autonomy.

They're automation with AI assistance. They help humans write Selenium or Cypress scripts faster. They suggest test cases based on code changes. But humans still define what to test. The bottleneck remains.

Tools like QA flow represent a different architecture. They generate test cases autonomously from Figma designs and GitHub commits, testing behavior specifications rather than implementation details. The qaflow.com/audit tool shows this approach for website testing: it finds SEO issues, broken links, and performance bottlenecks without human-defined test cases.

Where test intent originates

The architectural difference comes down to intent. Automated systems codify intent from human QA engineers. Autonomous systems derive it from design specs and commits.

Intent-based testing from Figma designs tests what should happen, not how it's implemented. This means tests survive refactors. They validate behavior specifications rather than CSS selectors or DOM structure that breaks on code changes.

When you change a button from a div to a button element, an automated script bound to the old selector breaks. A test derived from the design spec doesn't. The design spec says "clicking this should navigate to the next page." The implementation details are irrelevant.
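The div-to-button example can be made concrete with a small sketch. It is illustrative only: the markup, the `find_by_selector` and `find_by_intent` helpers, and the "Next" label are all assumptions, and it does not show how QA flow or any other tool actually locates elements. It only contrasts a lookup tied to tag and class with a lookup tied to what the user sees.

```python
from html.parser import HTMLParser


class ElementCollector(HTMLParser):
    """Collects tag, attributes, and text for each element in a small snippet.

    Deliberately minimal: it assumes well-formed markup without void
    elements such as <br> or <img>.
    """

    def __init__(self):
        super().__init__()
        self.elements = []
        self._open = []

    def handle_starttag(self, tag, attrs):
        element = {"tag": tag, "attrs": dict(attrs), "text": ""}
        self.elements.append(element)
        self._open.append(element)

    def handle_data(self, data):
        # Text belongs to every element that is currently open.
        for element in self._open:
            element["text"] += data

    def handle_endtag(self, tag):
        if self._open:
            self._open.pop()


def parse(html):
    collector = ElementCollector()
    collector.feed(html)
    collector.close()
    return collector.elements


def find_by_selector(elements, tag, css_class):
    """Implementation-bound lookup: depends on tag name and CSS class."""
    return [
        e for e in elements
        if e["tag"] == tag and css_class in (e["attrs"].get("class") or "").split()
    ]


def find_by_intent(elements, label):
    """Intent-bound lookup: any element whose visible text matches the label."""
    return [e for e in elements if e["text"].strip() == label]


# Hypothetical markup before and after the refactor.
BEFORE = '<div class="btn next" onclick="go()">Next</div>'
AFTER = '<button type="button" class="primary">Next</button>'

for name, html in (("before", BEFORE), ("after", AFTER)):
    elements = parse(html)
    print(name,
          "selector matches:", len(find_by_selector(elements, "div", "btn")),
          "intent matches:", len(find_by_intent(elements, "Next")))
```

The selector lookup stops matching once the tag and class change, while the text-based lookup keeps matching because the visible behavior contract ("there is a Next control") is unchanged.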

The market shift

ResearchAndMarkets projects that the global automation testing market will grow from $19.97 billion in 2025 to $51.36 billion by 2031. Autonomous testing platforms represent the fastest-growing segment.
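For a sense of scale, the two market figures above imply a compound annual growth rate. The calculation below is a back-of-the-envelope sketch that assumes steady year-over-year growth across six compounding periods (2025 to 2031); the report itself may state a different rate or use a different base year.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, end value, and period."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures in billions of USD, taken from the projection quoted above.
cagr = implied_cagr(19.97, 51.36, 2031 - 2025)
print(f"Implied CAGR: {cagr:.1%}")
```

Under those assumptions the implied rate is roughly 17% per year.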

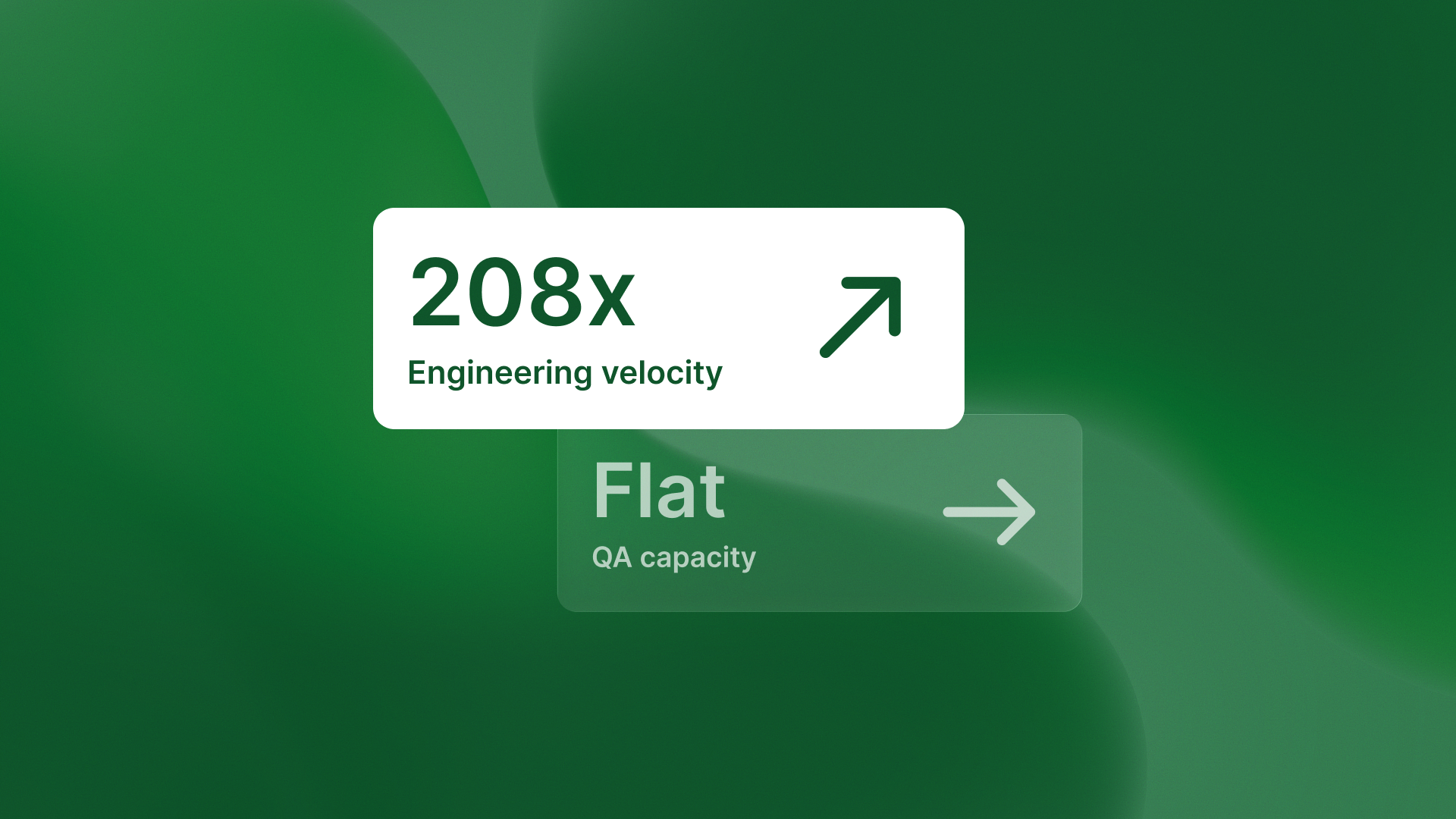

This growth reflects market recognition that test generation, not execution automation, is the real constraint. Teams don't need faster test execution. They need more test coverage without hiring more QA engineers.

The evaluation framework

Engineering leaders need a technical framework for evaluating testing tools. Ask these questions:

Does the tool generate test cases autonomously from design artifacts, or does it help humans write test scripts faster? Does it orchestrate multi-agent systems for test creation, execution, and reporting, or does it improve a single-purpose automation framework?
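These questions can be written down as a simple checklist. The sketch below is one hypothetical way to record the answers during an evaluation; the `ToolProfile` fields, the `classify` function, and the example tools are illustrative assumptions, not an established scoring method.

```python
from dataclasses import dataclass


@dataclass
class ToolProfile:
    """Answers to the evaluation questions for one tool (hypothetical fields)."""
    name: str
    derives_tests_from_design_artifacts: bool
    humans_define_test_cases: bool
    orchestrates_multiple_agents: bool


def classify(tool):
    """Label a tool by where test intent originates."""
    if tool.derives_tests_from_design_artifacts and not tool.humans_define_test_cases:
        return "autonomous"
    return "automated"


# Two made-up tools used only to exercise the checklist.
script_assistant = ToolProfile(
    name="Script assistant",
    derives_tests_from_design_artifacts=False,
    humans_define_test_cases=True,
    orchestrates_multiple_agents=False,
)
spec_driven_platform = ToolProfile(
    name="Spec-driven platform",
    derives_tests_from_design_artifacts=True,
    humans_define_test_cases=False,
    orchestrates_multiple_agents=True,
)

for tool in (script_assistant, spec_driven_platform):
    print(f"{tool.name}: {classify(tool)} "
          f"(multi-agent: {tool.orchestrates_multiple_agents})")
```

The classification keys off intent origin alone; the multi-agent answer is reported alongside it as a secondary signal rather than folded into the label.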

This distinction determines whether a tool scales QA capability or just improves QA team efficiency.

The takeaway

Automated testing makes human-written tests run faster and more reliably. Autonomous testing removes the human test-writing bottleneck entirely by generating tests from design intent. When assessing AI-powered testing tools, ask where test intent originates. If humans still define test cases and the AI helps write scripts, that's automation. If agents derive test cases from Figma designs and GitHub commits, that's autonomy.

The architectural difference determines whether the tool scales QA capability or just improves QA team efficiency. Autonomous testing platforms are growing fastest because they solve the bottleneck that matters: test generation, not execution speed.
