Test automation maintenance consumes 30-50% of QA budgets

Test automation breaks. Constantly.

The World Quality Report 2022-2023 found maintenance costs consume up to 50% of the overall test automation budget. In the worst cases, half of your automation spending goes to fixing broken tests rather than expanding coverage.

Most engineering leaders discover that test automation ROI collapses after 6-12 months. Teams maintaining large test suites spend more hours on broken tests than on new development. The culprit isn't poor test design. It's the fundamental architecture of traditional frameworks.

Traditional frameworks test implementation details

Tests written with Selenium, Cypress, and Playwright typically validate CSS selectors. DOM structure. Element IDs. When developers refactor UI code, tests break.

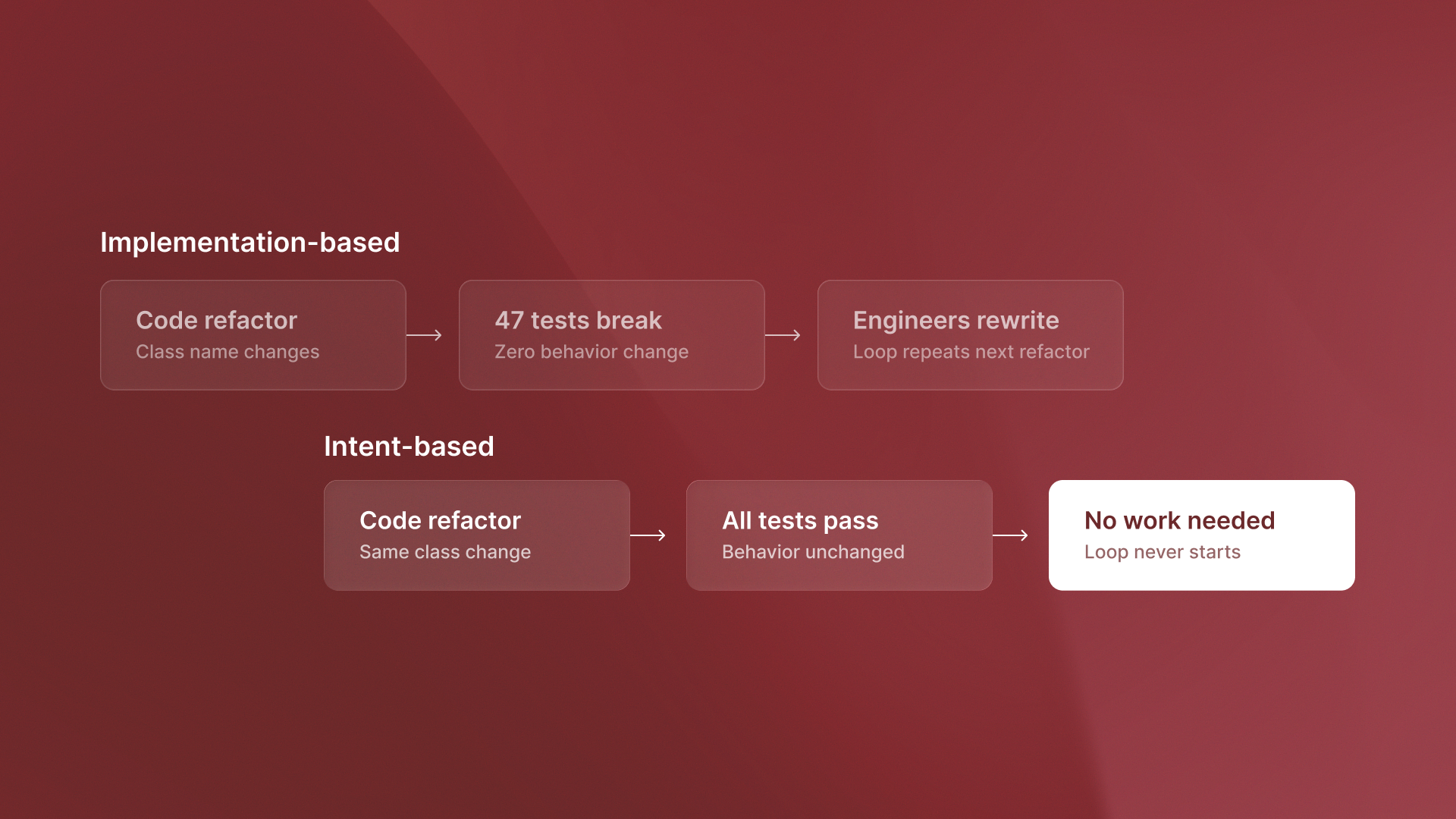

A developer refactors a checkout form to improve accessibility. The form works identically from a user perspective. But 47 tests break because button class names changed from checkout-btn-primary to btn-checkout-main.

The tests didn't detect a bug. They detected a cosmetic code change with zero impact on user experience. Yet someone must spend hours updating selectors across test files.

Testing implementation details means tests break when implementation changes, even when behavior stays constant.
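To make the checkout example concrete, here is a minimal Python sketch. The `Element` model, the `Card number` field, and the `Pay now` label are hypothetical stand-ins for a rendered form, and no real framework API is involved. The two versions of the form look and behave the same to a user; only the class name on the button differs.

```python
from dataclasses import dataclass, field


# Hypothetical stand-in for a rendered DOM element: its tag, CSS classes,
# ARIA-style role, and accessible name (the label a user actually sees).
@dataclass
class Element:
    tag: str
    classes: set[str] = field(default_factory=set)
    role: str = ""
    name: str = ""


# Checkout form before and after the refactor: only the button's class changes.
before = [
    Element("input", {"card-field"}, role="textbox", name="Card number"),
    Element("button", {"checkout-btn-primary"}, role="button", name="Pay now"),
]
after = [
    Element("input", {"card-field"}, role="textbox", name="Card number"),
    Element("button", {"btn-checkout-main"}, role="button", name="Pay now"),
]


def implementation_test(dom: list[Element]) -> bool:
    """Passes only if some element carries the exact class checkout-btn-primary."""
    return any("checkout-btn-primary" in el.classes for el in dom)


def behavior_test(dom: list[Element]) -> bool:
    """Passes if the user can find a button labelled 'Pay now', whatever its classes."""
    return any(el.role == "button" and el.name == "Pay now" for el in dom)


print(implementation_test(before), implementation_test(after))  # True False
print(behavior_test(before), behavior_test(after))  # True True
```

The first check encodes an implementation detail, so the rename breaks it even though nothing changed for the user. The second encodes what the user sees, so it survives the refactor.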

Rainforest QA's 2025 survey found that 55% of teams using Selenium, Cypress, or Playwright spend more than 20 hours each week creating and maintaining automated tests. At scale, the problem accelerates.

Intel Market Research 2025 found that teams with over 1,000 tests spend 60% of their time on maintenance rather than new test development.

The maintenance burden grows faster than test coverage. More tests mean more breakage points. Eventually teams hit a ceiling where adding new tests costs more than the value they provide.

Intent-based testing validates behavior from design specs

Intent-based testing takes a different approach. It checks what the code should do, as defined in design specs, rather than what the code currently does, as exposed through CSS selectors.

When a test validates "the user can complete the checkout flow" from Figma specs, rather than "a button with class checkout-btn-primary exists", code refactors don't break it. The behavior being tested remains constant even as the implementation changes.

Tools like QA flow generate tests directly from Figma designs and user stories. The test understands the intended behavior: the user clicks the payment button, enters card details, and sees the confirmation screen. How that behavior is implemented in code is irrelevant.

Developers can refactor the entire checkout component. Change class names, restructure the DOM, move elements around. As long as the user can still complete checkout, the test passes.

No maintenance required.
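The idea can be sketched in Python, assuming the intent is reduced to a list of user-visible steps. This is not QA flow's actual test format, which isn't described here: `Checkout`, `run_intent`, `CHECKOUT_INTENT`, and the extra `Place order` step are all hypothetical. The runner looks elements up only by role and visible label, so two variants that differ in class names and element order both pass.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Element:
    role: str  # ARIA-style role: "button", "textbox", or "text"
    name: str  # accessible name / visible label
    css_class: str = ""  # implementation detail; the runner never reads it
    on_click: Optional[Callable[[], None]] = None
    on_input: Optional[Callable[[str], None]] = None


class Checkout:
    """Hypothetical checkout component; `variant` changes markup, not behavior."""

    def __init__(self, variant: str):
        self.variant = variant
        self.screen = "cart"
        self.card = ""

    def render(self) -> list[Element]:
        cls = "checkout-btn-primary" if self.variant == "v1" else "btn-checkout-main"
        if self.screen == "cart":
            return [Element("button", "Pay now", cls, on_click=self._open_payment)]
        if self.screen == "payment":
            els = [
                Element("textbox", "Card number", "card-field", on_input=self._set_card),
                Element("button", "Place order", cls, on_click=self._place_order),
            ]
            # v2 moves the button above the field: a DOM restructure.
            return els if self.variant == "v1" else els[::-1]
        return [Element("text", "Order confirmed", "confirmation")]

    def _open_payment(self) -> None:
        self.screen = "payment"

    def _set_card(self, value: str) -> None:
        self.card = value

    def _place_order(self) -> None:
        if self.card:  # only confirm once card details were entered
            self.screen = "confirmed"


# The intent: what the user does and sees, with no selectors or DOM paths.
CHECKOUT_INTENT = [
    ("click", "Pay now"),
    ("fill", "Card number", "4242 4242 4242 4242"),
    ("click", "Place order"),
    ("expect_text", "Order confirmed"),
]


def find(app: Checkout, role: str, name: str) -> Element:
    for el in app.render():
        if el.role == role and el.name == name:
            return el
    raise AssertionError(f"no {role} named {name!r}")


def run_intent(app: Checkout, steps) -> bool:
    for action, label, *rest in steps:
        if action == "click":
            find(app, "button", label).on_click()
        elif action == "fill":
            find(app, "textbox", label).on_input(rest[0])
        elif action == "expect_text":
            find(app, "text", label)
        else:
            raise ValueError(f"unknown action {action!r}")
    return True


print(run_intent(Checkout("v1"), CHECKOUT_INTENT))  # True
print(run_intent(Checkout("v2"), CHECKOUT_INTENT))  # True
```

Because every lookup goes through role and label, renaming classes or reordering elements leaves the spec untouched. A real behavioral regression still fails: if the card step is skipped, the confirmation never appears and `find` raises.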

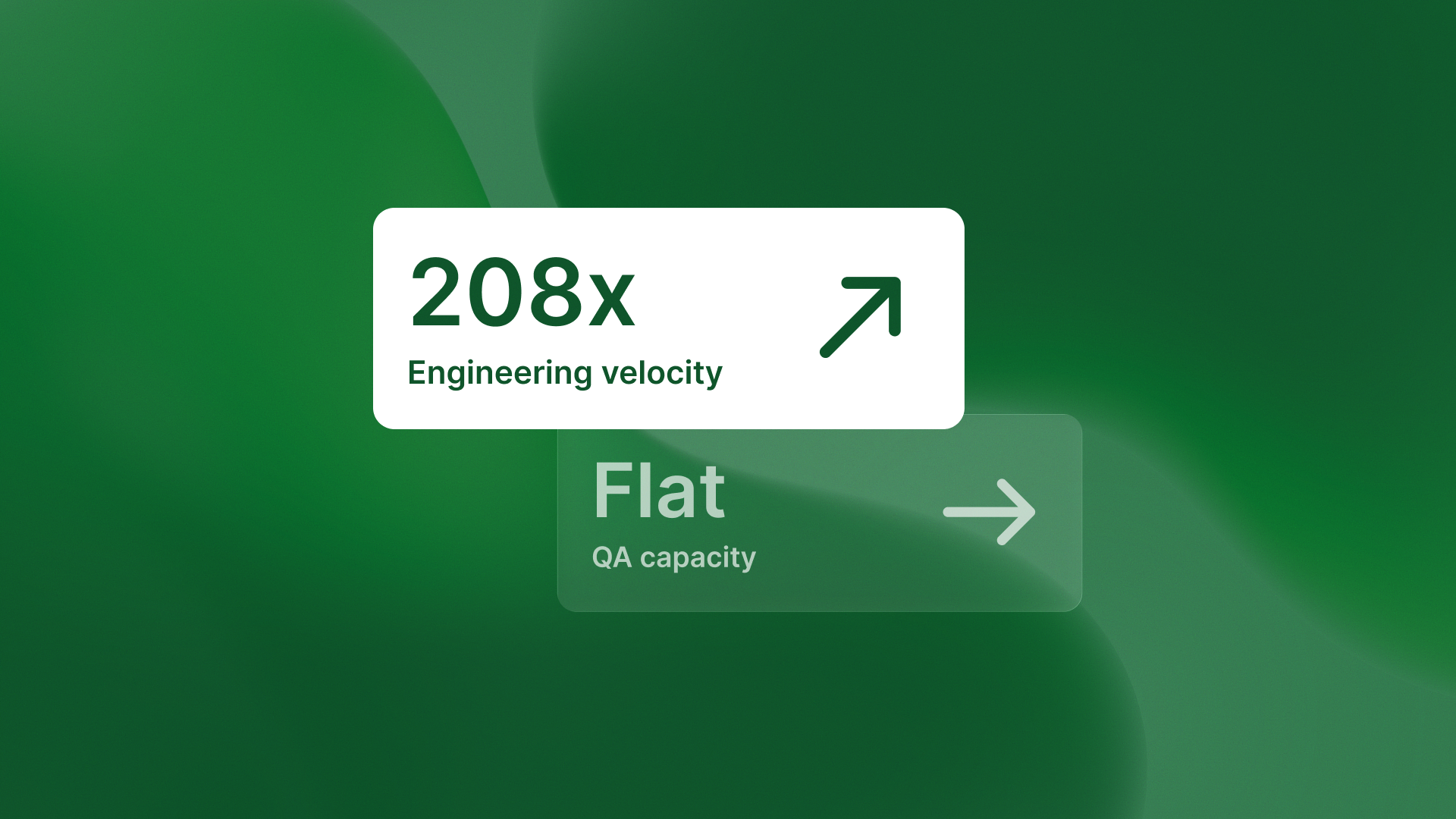

Teams using QA flow regularly move from biweekly releases to weekly, and from weekly to twice-weekly. The gain isn't just speed. It's stability and confidence.

The architectural difference that eliminates maintenance

Traditional test automation requires humans to define test cases based on the current implementation. When the implementation changes, humans must rewrite the tests.

Autonomous testing generates tests from design intent. Design intent remains stable across refactors. A checkout flow defined in Figma doesn't change when a developer renames CSS classes. Tests generated from that Figma spec stay valid through every code refactor that preserves the original behavior.

QA flow handles repetition so teams focus on insight. QA budgets go toward expanding coverage and catching real bugs, not repairing tests broken by cosmetic code changes.

Test automation that validates implementation details creates maintenance debt that compounds as the suite grows. Intent-based testing that validates behavior from design specs eliminates that debt entirely.
