The hidden cost of test automation maintenance


Up to 50% of your test automation budget doesn't go to testing. It goes to maintenance. According to the World Quality Report 2022-2023, organizations dedicate 30% to 50% of their testing resources to maintaining and updating test scripts. For every $100,000 spent on test automation, $30,000 to $50,000 goes to keeping existing tests running.

That money does not expand coverage or help find new bugs. This isn't a tooling problem. It's an architecture problem.

The implementation tax

Traditional test automation tools like Selenium, Cypress, and Playwright are typically used to validate how code works, not what it should do. Tests locate elements with brittle selectors (CSS classes, XPath expressions, data attributes) that break whenever developers refactor the markup. Moving a button from a div into a section tag breaks tests even though user behavior is identical. Renaming a class from "btn-primary" to "button-main" triggers test failures despite zero functional change.
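To make that failure mode concrete, here is a small Python sketch that uses the standard library's `xml.etree.ElementTree` as a stand-in for a browser DOM. It is not Selenium, Cypress, or Playwright code; the markup and both lookup functions are hypothetical. The first lookup mimics a path-and-class selector; the second finds the button only by its element type and visible label.

```python
import xml.etree.ElementTree as ET

# Hypothetical checkout markup before and after a refactor. The refactor moves the
# button from a <div> into a <section> and renames its class; the user sees no change.
BEFORE = '<main><div><button class="btn-primary" type="submit">Place order</button></div></main>'
AFTER = '<main><section><button class="button-main" type="submit">Place order</button></section></main>'


def find_by_implementation(markup: str):
    """Brittle lookup: depends on the DOM path (main > div > button) and the class name."""
    root = ET.fromstring(markup)
    return root.find("./div/button[@class='btn-primary']")


def find_by_intent(markup: str, label: str):
    """Structure-independent lookup: any <button> whose visible text matches the label."""
    root = ET.fromstring(markup)
    for button in root.iter("button"):
        if (button.text or "").strip() == label:
            return button
    return None


for name, markup in (("before refactor", BEFORE), ("after refactor", AFTER)):
    print(
        f"{name}: selector found={find_by_implementation(markup) is not None}, "
        f"label lookup found={find_by_intent(markup, 'Place order') is not None}"
    )
```

Before the refactor both lookups succeed; afterwards only the label-based lookup still finds the button. Matching on visible text is not immune to change either (copy edits, localization); the sketch only shows that keying on what the user sees survives structural refactors that markup-based selectors do not.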

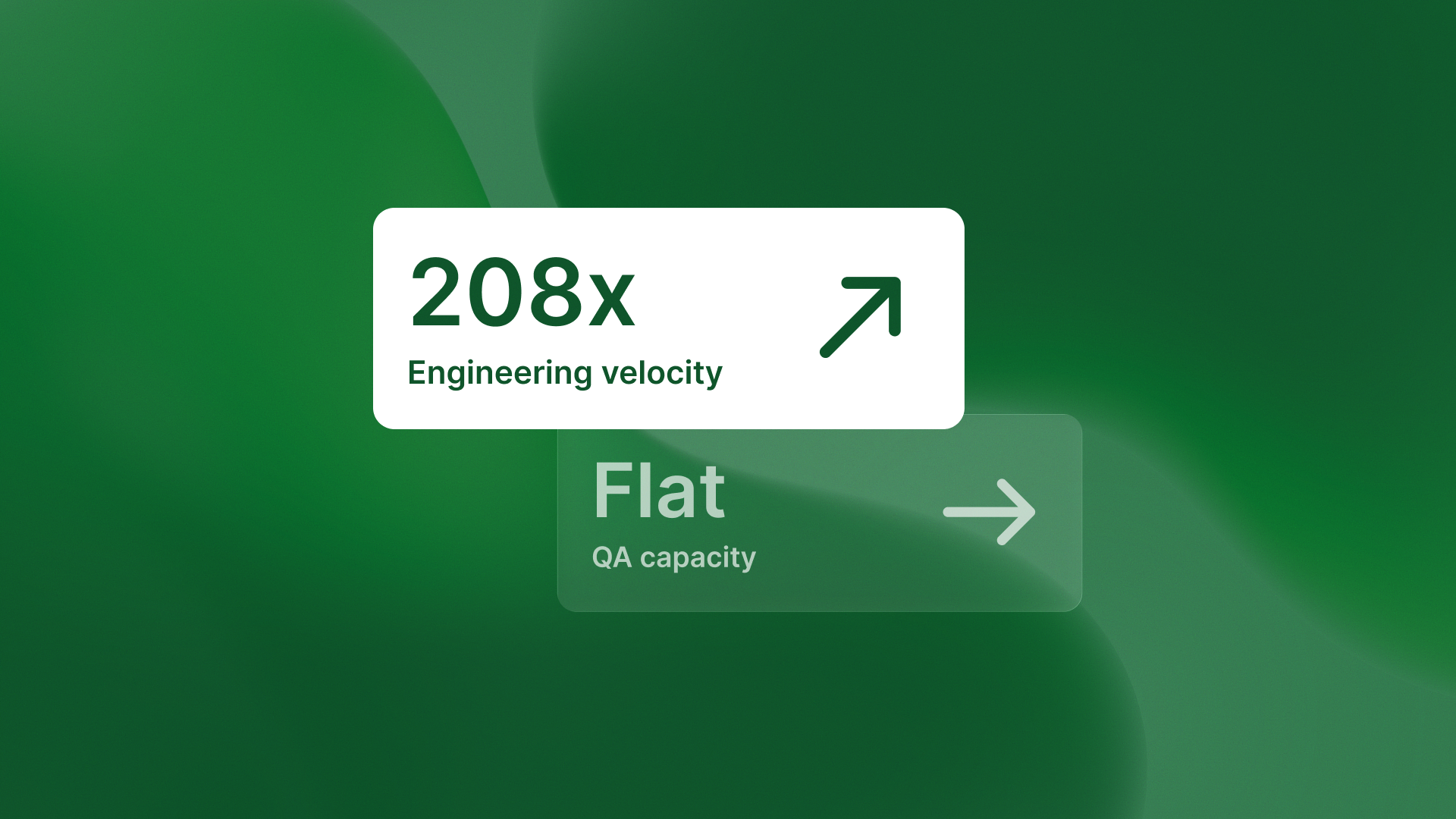

This creates a cascading maintenance burden: every code refactor requires proportional test updates. What starts as two hours per week of test maintenance can become 20 hours per week as a codebase grows from 100K to 1M lines of code. Teams end up dedicating entire engineers to keeping automation running instead of building new features.
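The jump from 2 to 20 hours is what a simple proportional model predicts for a tenfold increase in code size. A Python sketch, assuming maintenance hours grow linearly with lines of code and a 40-hour work week (both assumptions for illustration, not measured data):

```python
# Illustrative linear model. The baseline (2 hours/week at 100K lines) comes from
# the figures above; the 40-hour week used to convert hours into engineers is assumed.
BASELINE_LOC = 100_000
BASELINE_HOURS_PER_WEEK = 2.0
HOURS_PER_FTE = 40.0


def weekly_maintenance_hours(loc: int) -> float:
    """Maintenance hours per week, assuming they grow in proportion to lines of code."""
    return BASELINE_HOURS_PER_WEEK * loc / BASELINE_LOC


for loc in (100_000, 1_000_000, 2_000_000):
    hours = weekly_maintenance_hours(loc)
    print(f"{loc:>9,} lines: {hours:4.1f} h/week = {hours / HOURS_PER_FTE:.2f} engineers")
```

Under these assumptions, 1M lines costs 20 hours a week (half an engineer), and 2M lines consumes a full engineer's week.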

Why intent-based testing eliminates maintenance

Intent-based autonomous testing validates behavior against design specifications, not implementation details. By reading Figma designs and GitHub commit messages, systems like QA flow can infer intended behavior. They generate tests that confirm the checkout button actually completes a purchase, rather than merely checking that an element with a particular class exists.

When developers refactor code, these tests remain valid because the design intent hasn't changed. The architectural difference is fundamental: implementation-based tests break on every refactor because they test the wrong thing. Intent-based tests stay valid when the implementation changes because they check what the system should do, not how it does it today.
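A minimal Python sketch of that difference, with everything hypothetical: two checkout implementations that behave identically (V2 is a refactor of V1), one that renders the same button but never completes the purchase, and two tests. The intent test's expected outcome is hard-coded here; the sketch does not show how QA flow or any other system infers intent from Figma designs or commit messages.

```python
# Hypothetical checkout implementations; names, markup, and prices are illustrative only.


class CheckoutV1:
    def render_button(self) -> str:
        return '<div><button class="btn-primary">Place order</button></div>'

    def place_order(self, cart: dict[str, int]) -> dict:
        total = 0
        for price_cents in cart.values():
            total += price_cents
        return {"status": "confirmed", "total_cents": total}


class CheckoutV2:
    """Refactor of V1: new markup and a rewritten total, identical behavior."""

    def render_button(self) -> str:
        return '<section><button class="button-main">Place order</button></section>'

    def place_order(self, cart: dict[str, int]) -> dict:
        return {"status": "confirmed", "total_cents": sum(cart.values())}


class CheckoutBroken(CheckoutV1):
    """Same markup as V1, but the purchase silently fails."""

    def place_order(self, cart: dict[str, int]) -> dict:
        return {"status": "error", "total_cents": 0}


def implementation_test(app) -> bool:
    # Checks *how* the page is built: an element with a specific class exists.
    return 'class="btn-primary"' in app.render_button()


def intent_test(app) -> bool:
    # Checks *what* should happen: checking out a cart confirms an order for its total.
    cart = {"sku-1": 1_999, "sku-2": 500}
    order = app.place_order(cart)
    return order["status"] == "confirmed" and order["total_cents"] == sum(cart.values())


for app in (CheckoutV1(), CheckoutV2(), CheckoutBroken()):
    print(type(app).__name__, implementation_test(app), intent_test(app))
```

The implementation-level check raises a false alarm on the harmless refactor (V2) and passes the genuinely broken build, while the intent-level check does the opposite on both counts.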

The maintenance tax scales with growth

As codebases grow, the maintenance burden grows with them. Test suites expand in proportion to features, and maintenance time scales linearly with suite size. According to Test Automation Software Statistics 2025, effective strategies can cut test maintenance costs by up to 30%, yet most organizations see those costs rise instead.

The result is predictable: test automation that was supposed to accelerate velocity becomes a tax on development speed. Engineers spend more time updating tests than writing new ones. Quality initiatives that promised efficiency deliver technical debt instead.


The architectural solution

Autonomous testing eliminates the maintenance tax by regenerating tests from a source of truth rather than updating brittle selectors. When design specs in Figma are the source of truth, tests are regenerated automatically when designs change intentionally. Behavior-preserving code changes (refactors, technical-debt cleanup, framework migrations) don't break tests because the behavior being validated stays the same.

This architectural difference replaces ongoing maintenance cost with zero-cost test regeneration. The qaflow.com/audit tool demonstrates this approach by validating behavior against design intent rather than implementation details.
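The regeneration loop described in this section can be sketched in Python under heavy simplification. The spec dictionaries, `spec_fingerprint`, `generate_tests`, and `SpecDrivenSuite` are hypothetical stand-ins, not QA flow's API or Figma's data model. Tests are rebuilt only when the spec's content hash changes, so a refactor that leaves the spec untouched triggers no work.

```python
import hashlib
import json
from typing import Optional

# Hypothetical design spec; in practice this would be derived from Figma, not hand-written.
SPEC_V1 = {
    "screen": "checkout",
    "flows": [{"action": "click 'Place order'", "expect": "order confirmed for cart total"}],
}
# An intentional design change: a new coupon flow is added.
SPEC_V2 = {
    "screen": "checkout",
    "flows": SPEC_V1["flows"] + [{"action": "apply coupon SAVE10", "expect": "total reduced by 10%"}],
}


def spec_fingerprint(spec: dict) -> str:
    """Content hash of the spec; key order does not affect the result."""
    canonical = json.dumps(spec, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def generate_tests(spec: dict) -> list[str]:
    """Turn each flow in the spec into a behavior-level test description."""
    return [f"{flow['action']} -> expect {flow['expect']}" for flow in spec["flows"]]


class SpecDrivenSuite:
    """Holds generated tests and rebuilds them only when the spec changes."""

    def __init__(self) -> None:
        self.fingerprint: Optional[str] = None
        self.tests: list[str] = []
        self.regenerations = 0

    def sync(self, spec: dict) -> bool:
        """Regenerate tests if the spec changed; return True when regeneration happened."""
        fingerprint = spec_fingerprint(spec)
        if fingerprint == self.fingerprint:
            return False
        self.tests = generate_tests(spec)
        self.fingerprint = fingerprint
        self.regenerations += 1
        return True


suite = SpecDrivenSuite()
suite.sync(SPEC_V1)  # first run: tests generated from the spec
suite.sync(SPEC_V1)  # simulates a code refactor: spec unchanged, nothing regenerated
suite.sync(SPEC_V2)  # intentional design change: tests regenerated
print(suite.regenerations, suite.tests)
```

Hashing a canonical JSON form (`sort_keys=True`) keeps the fingerprint independent of key order, so only real content changes to the spec trigger regeneration.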

The takeaway

The maintenance tax isn't inevitable. It's the predictable result of testing implementation instead of intent. Companies that continue scaling traditional automation are choosing to scale technical debt. Test automation that requires constant human maintenance isn't autonomous. It's automated busywork.
