The QA bottleneck that kills Series B scaling

Manual QA doesn't scale linearly. Most engineering leaders don't realize this until it's too late.

The non-linear trap

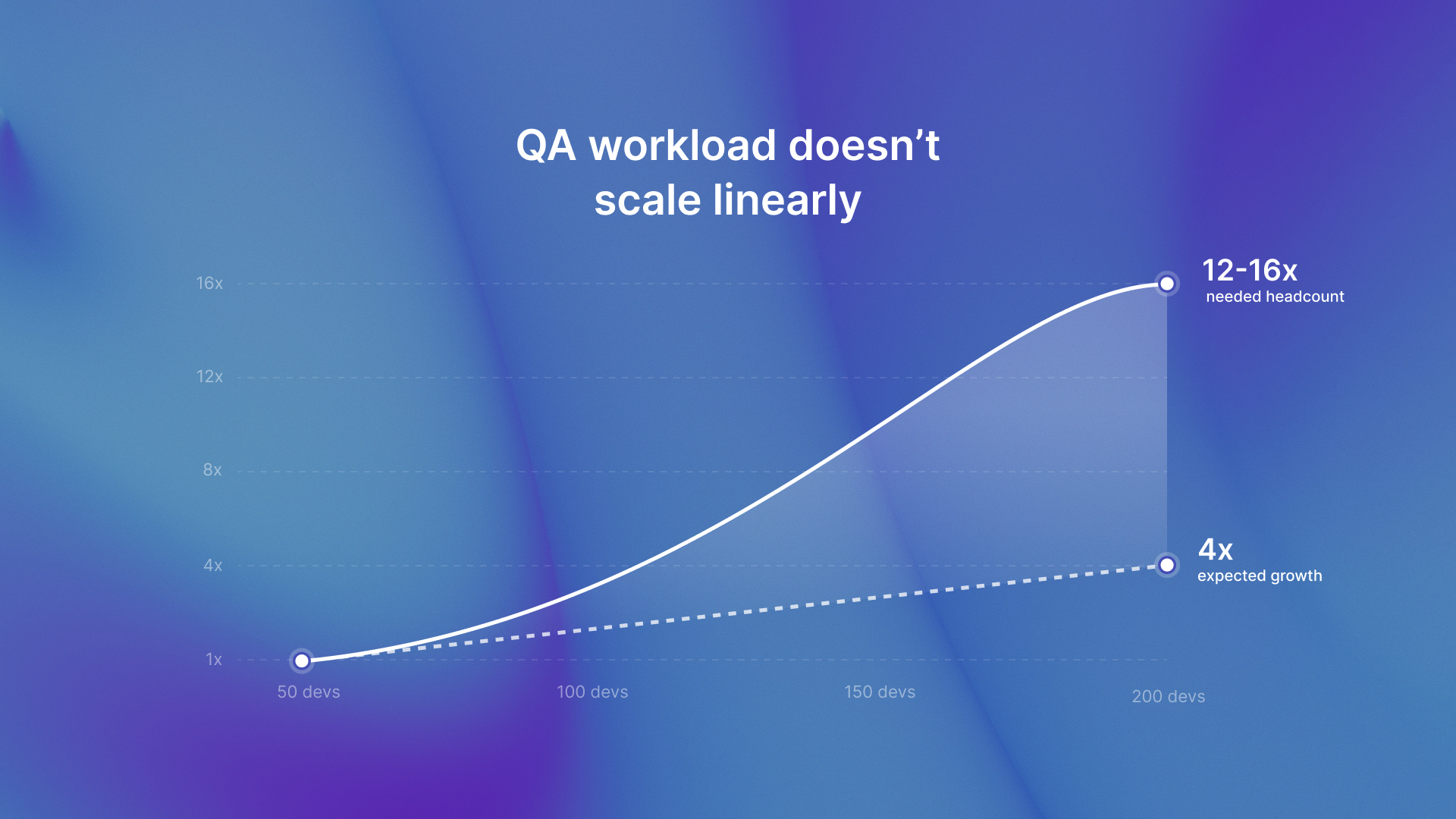

You're a VP Eng at a Series B startup. Last year you had 50 developers. This year you'll hit 200. You hired QA engineers proportionally, maybe 1 QA per 10 devs. But your release cycle just went from 3 days to 2 weeks.

What happened?

QA workload doesn't double when you double headcount. It triples or quadruples due to integration complexity, regression scope expansion, and cross-team dependencies. The math breaks.
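To make that claim concrete, here is a rough sketch of the arithmetic. It assumes QA workload follows a simple power law in headcount (workload proportional to headcount raised to some exponent k). That model is an illustrative assumption, not something measured here, and the function names are hypothetical.

```python
import math


def implied_exponent(workload_multiplier_per_doubling: float) -> float:
    """Exponent k such that workload ~ headcount ** k (assumed power-law model)."""
    return math.log2(workload_multiplier_per_doubling)


def workload_multiplier(headcount_growth: float, k: float) -> float:
    """How much QA workload grows when headcount grows by `headcount_growth`."""
    return headcount_growth ** k


# "Triples or quadruples" per doubling implies an exponent between these two.
k_low = implied_exponent(3.0)   # roughly 1.58
k_high = implied_exponent(4.0)  # 2.0

# The scenario from the text: 50 developers growing to 200.
growth = 200 / 50
low = workload_multiplier(growth, k_low)
high = workload_multiplier(growth, k_high)

print(f"Implied exponent range: {k_low:.2f} to {k_high:.2f}")
print(f"Workload growth for {growth:.0f}x headcount: {low:.1f}x to {high:.1f}x")
```

Under this assumed model, a 4x jump in developers means roughly 9x to 16x the QA workload, not 4x.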

72% of teams have integrated QA automation into CI/CD pipelines, yet regression testing still consumes 40-50% of QA team time on average. That gap suggests automation alone doesn't solve the bottleneck.

Execution speed isn't the problem. Test generation and maintenance burden is.

Why proportional hiring fails

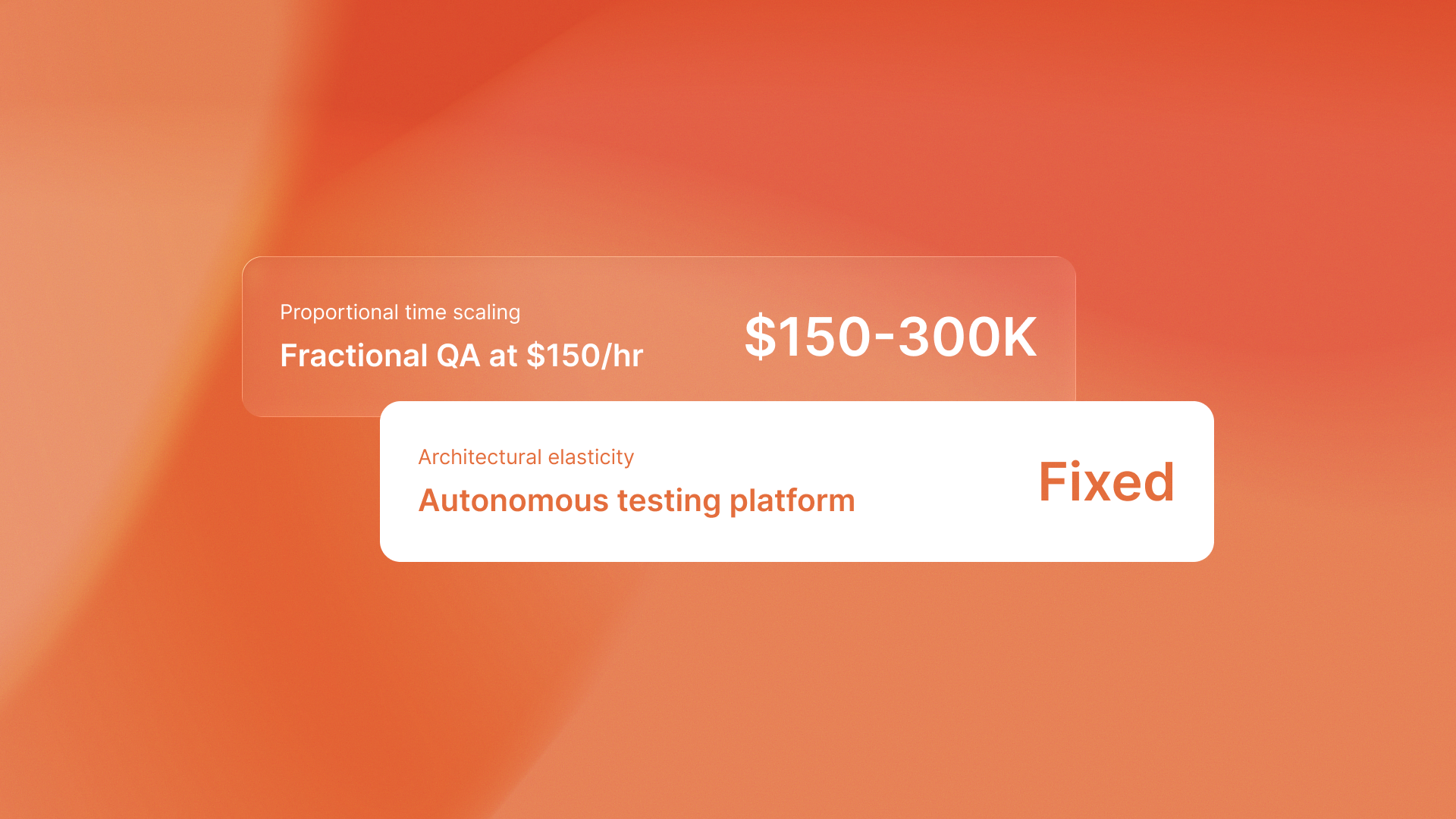

If you scale from 50 to 200 devs (4x growth), you'd need at least 4x QA headcount just to maintain the same cycle time, and more if workload grows faster than headcount. But QA hiring is slower than dev hiring, and salary competition is fierce.

AI automation can reduce testing time by 50-70%, yet most teams still write test cases by hand, even when they use Selenium or Cypress.
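A quick way to see why a fixed ratio falls behind: the sketch below compares workload growth with QA headcount growth at 1 QA per 10 devs. The power-law exponent `k` is an assumed modeling parameter (1.0 means linear growth, 2.0 means workload quadruples per doubling), and the function names are hypothetical.

```python
def load_per_qa_engineer(devs_before: int, devs_after: int,
                         devs_per_qa: int, k: float) -> float:
    """Relative load on each QA engineer after growth (1.0 = unchanged).

    Assumes QA is hired at a fixed ratio and that workload
    scales as headcount ** k (an illustrative assumption).
    """
    qa_before = devs_before / devs_per_qa
    qa_after = devs_after / devs_per_qa
    workload_growth = (devs_after / devs_before) ** k
    qa_growth = qa_after / qa_before
    return workload_growth / qa_growth


def qa_needed_for_flat_load(devs_before: int, devs_after: int,
                            devs_per_qa: int, k: float) -> float:
    """QA headcount needed to keep per-engineer load where it started."""
    qa_before = devs_before / devs_per_qa
    return qa_before * (devs_after / devs_before) ** k


for k in (1.0, 2.0):
    load = load_per_qa_engineer(50, 200, 10, k)
    needed = qa_needed_for_flat_load(50, 200, 10, k)
    print(f"k={k}: load per QA engineer x{load:.2f}, "
          f"QA needed for flat load: {needed:.0f}")
```

With linear growth (k = 1.0), proportional hiring holds the line at 20 QA engineers. With k = 2.0, the same 20 engineers each carry four times the load, and keeping load flat would take 80.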

The bottleneck isn't test execution. It's test generation from commit intent and design specs.

Most AI testing tools just generate Selenium scripts. People must still write the test cases and fix fragile selectors. Autonomous testing instead generates tests from Figma specs and commit messages, which removes the test case writing bottleneck.

Where QA capacity should go

Redeploying QA capacity from regression to exploratory work catches 3x more critical bugs per hour. Exploratory testing focuses on edge cases, UX validation, and domain-specific scenarios that autonomous systems can't predict.

Regression testing consumes 40-50% of QA team time. If autonomous testing handles regression, QA engineers reclaim that 40-50% for high-judgment work.
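As a back-of-the-envelope sketch, here is what that reclaimed share looks like in full-time equivalents. The 20-person team size comes from the 1-per-10 ratio at 200 devs; the assumption that autonomous testing absorbs all regression work is an optimistic simplification, and the function name is hypothetical.

```python
def reclaimed_fte(qa_headcount: int, regression_share: float) -> float:
    """Full-time equivalents freed if regression work is fully automated.

    Assumes every hour currently spent on regression is reclaimed,
    which is a best-case simplification.
    """
    return qa_headcount * regression_share


qa_team = 200 // 10  # 1 QA per 10 devs at 200 devs

fte_low = reclaimed_fte(qa_team, 0.40)
fte_high = reclaimed_fte(qa_team, 0.50)

print(f"QA team size: {qa_team}")
print(f"Capacity reclaimed for exploratory work: "
      f"{fte_low:.0f} to {fte_high:.0f} FTE")
```

In this best case, a 20-person QA team frees up the equivalent of 8 to 10 engineers without a single new hire.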

The value isn't just cycle time reduction. It's redirecting scarce QA talent to work only humans can do.

QA flow generates tests from Figma designs and commit messages, running continuous regression checks without human test case writing. This redeployment model doesn't replace QA engineers. It makes them 3x more effective by eliminating low-value work.

For teams looking to strengthen their test design foundation, test design techniques provide a framework for deciding what to test and why it matters.

The real deployment bottleneck

The deployment bottleneck isn't QA execution speed. Manual regression testing forces a step-by-step workflow. QA waits for developers to finish. Then developers wait for QA feedback. This creates two-week ping-pong cycles.

Autonomous testing runs in parallel with development. Tests are generated from design specs before code is written. Regression suites run on every commit. Feedback loops compress from days to minutes.

The audit at qaflow.com/audit shows this principle at the product level. It groups issues by type and by key page, which helps teams avoid low-signal noise.

The takeaway

Scaling QA isn't about hiring more people. It's about redeploying the people you have from repetitive regression work to high-judgment exploratory testing.

Autonomous testing doesn't replace QA engineers. It makes them 3x more effective.
