Why test automation maintenance cost exceeds the tests themselves

March 2024. Series B startup with 120 engineers. They've automated 400 end-to-end tests. Two senior QA engineers spend 60% of their week fixing tests broken by frontend refactoring.

This isn't an execution problem. It's a structural limitation baked into how traditional test automation works.

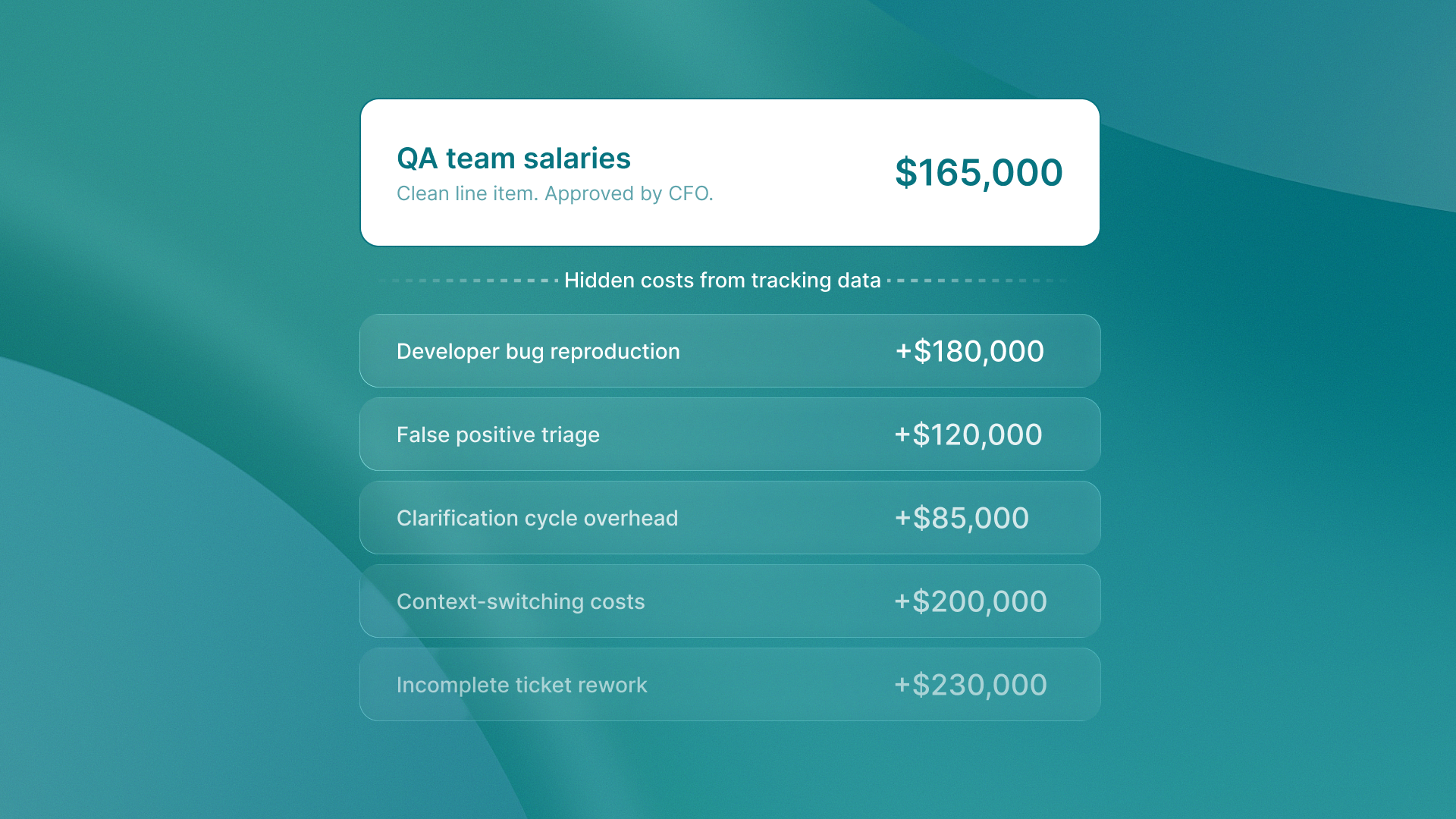

The 50% test automation maintenance cost tax

I analyzed time tracking data across QA teams at growth-stage companies. The pattern is consistent: traditional test automation maintenance consumes 50% of QA team effort, stalling creation of new tests. Half your automation budget disappears into keeping existing tests functional.

This creates a predictable cycle. As test suites grow, QA automation maintenance work compounds faster than test creation. You face a binary choice: hire proportionally to maintain coverage (expensive) or accept declining coverage (competitive risk). The math doesn't work either way.

Here's what happens when you build test automation the traditional way:

- Developers refactor a component, changing CSS class names

- 40 tests break because they're bound to .button-primary selectors

- Senior QA engineers spend three days updating selectors across the suite

- The feature works perfectly but the tests say it's broken

This is the test maintenance overhead that most engineering leaders don't budget for.
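To make that failure mode concrete, here is a minimal sketch using only Python's standard library. The markup, the `ButtonCollector` class, and the helper names are all hypothetical, invented for illustration; a real suite would drive a browser rather than parse strings. The point is only that a check bound to `.button-primary` fails after the rename even though the user sees the same button.

```python
from html.parser import HTMLParser


class ButtonCollector(HTMLParser):
    """Collects (css_classes, visible_label) for every <button> in a page."""

    def __init__(self):
        super().__init__()
        self.buttons = []
        self._classes = None  # set only while we are inside a <button>
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._classes = (dict(attrs).get("class") or "").split()
            self._text = []

    def handle_data(self, data):
        if self._classes is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "button" and self._classes is not None:
            self.buttons.append((self._classes, "".join(self._text).strip()))
            self._classes = None


def parse_buttons(html):
    collector = ButtonCollector()
    collector.feed(html)
    collector.close()
    return collector.buttons


def selector_bound_test(html):
    """Passes only if some button still carries the button-primary class."""
    return any("button-primary" in classes for classes, _ in parse_buttons(html))


def visible_labels(html):
    """What the user actually sees on the buttons."""
    return [label for _, label in parse_buttons(html)]


# Hypothetical markup before and after the refactor: only the class changes.
BEFORE = '<form><button class="button-primary">Save</button></form>'
AFTER = '<form><button class="btn btn--main">Save</button></form>'

print(selector_bound_test(BEFORE))  # the test passes before the refactor
print(selector_bound_test(AFTER))   # the same test fails after it
print(visible_labels(BEFORE) == visible_labels(AFTER))  # the user sees no change
```

Multiply that one failing check by every test that touches the renamed class and you get the three days of selector updates.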

Where QA engineering time actually goes

The maintenance tax shows up as triage overhead. QA engineers spend 20-30% of their work week triaging failures to distinguish true regressions from flaky tests caused by UI shifts. That's at least one full day per week spent answering the question: "Is this a real bug or did someone rename a CSS class?"

This isn't a team execution problem. It's a structural limitation of CSS selector-based automation that tests implementation details rather than intended behavior. Your tests break when the code changes, even when the behavior stays identical. Your test automation suite is costing you more than you realize because it's anchored to implementation, not intent.

The compounding effect is brutal. According to the Journal of Software: Evolution and Process, for every 100 hours spent on initial script development, an additional 15-25 hours are required annually for maintenance. For a 1,000-test suite that took roughly 1,000 hours to script, that means 150-250 hours of maintenance every year. That work falls on senior engineers who understand both the codebase and the test architecture.
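The arithmetic behind that range is easy to rerun with your own numbers. This is a rough sketch, not a forecasting model: the function name is made up, and the one-hour-per-test figure is an assumption needed to get from a test count to scripting hours. Only the 15-25 hours per 100 comes from the cited ratio.

```python
def annual_maintenance_hours(initial_hours, low_pct=15, high_pct=25):
    """Return the (low, high) annual maintenance estimate in hours.

    The defaults encode the 15-25 hours per 100 hours of initial
    script development quoted above.
    """
    return (initial_hours * low_pct / 100, initial_hours * high_pct / 100)


# Assumption for illustration: each test takes about one hour to script.
HOURS_PER_TEST = 1
suite_size = 1000

low, high = annual_maintenance_hours(suite_size * HOURS_PER_TEST)
print(f"{low:.0f}-{high:.0f} maintenance hours per year")
```

If your tests take closer to three hours each to script, the same suite lands at three times that range, which is why the per-test assumption matters more than the suite size.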

The pattern mirrors what happens with fragmented LinkedIn workflows. Tool-switching overhead compounds over time. Context switching from development to test maintenance creates the same friction. Your senior engineers aren't building new tests. They're trapped maintaining old ones.

The brittle test selectors problem

Brittle test selectors are the root cause. Traditional automation platforms bind tests to CSS classes, element IDs, and DOM structure. When developers refactor code, they may rename classes, restructure components, or update styles. Tests can break even when the user experience stays the same.

I saw this firsthand at a fintech startup in Q2 2024. They migrated from Bootstrap to Tailwind CSS. Functionally identical UI. Visually identical user experience. But 380 of their 420 automated tests failed because every CSS class had changed.

Two senior automation engineers spent six weeks updating selectors. They weren't testing new features. They weren't improving coverage. They were translating .btn-primary to .bg-blue-500 across hundreds of test files.
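To show how mechanical that work is, here is a hedged sketch of the kind of rewrite involved. The `rewrite_selectors` helper, the mapping, and the `page.click(...)` lines are hypothetical. A real Bootstrap-to-Tailwind migration rarely maps one class to one class this cleanly, which is part of why it took people six weeks instead of a script.

```python
import re


def rewrite_selectors(source, mapping):
    """Replace each old class selector in `source` with its new one."""
    if not mapping:
        return source
    # Longest names first, and refuse matches that continue into a longer
    # class name (so .btn-primary never touches .btn-primary-outline).
    names = sorted(mapping, key=len, reverse=True)
    pattern = re.compile(
        "(?:" + "|".join(re.escape(name) for name in names) + r")(?![\w-])"
    )
    return pattern.sub(lambda match: mapping[match.group(0)], source)


# The one mapping named above; every other pair would need the same care.
MAPPING = {".btn-primary": ".bg-blue-500"}

# Hypothetical lines from a test file, held in a string for the demo.
old_test = 'page.click(".btn-primary")\npage.click(".btn-primary-outline")'
print(rewrite_selectors(old_test, MAPPING))
```

Even when a script like this works, it only moves the tests from one set of implementation details to another. The next refactor breaks them again.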

This is why half your testing budget disappears into maintenance, not new coverage: you are testing implementation details, not user behavior.

Why intent-based testing eliminates the maintenance tax

Intent-based testing checks what the app should do, based on design specs, rather than how it is built (CSS selectors, DOM structure). When developers refactor code, they may change class names, restructure components, or update styling. Intent-based tests remain valid through all of that because the intended behavior has not changed.

This breaks the maintenance tax cycle entirely. Platforms like QA flow generate tests from Figma design specs and GitHub commits. They create tests that verify behavior, not implementation. The tests stay valid through refactoring because they're anchored to design intent, not code structure.

The architectural difference matters:

- Traditional automation says: "Click the element with class .submit-button."

- Intent-based testing says: "Complete the checkout flow."

- One breaks when you rename a CSS class.

- The other stays valid until the checkout flow itself changes.
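The contrast in that list can be sketched with a toy model. Everything here is hypothetical: the `Checkout` class, its methods, and both test functions are invented for illustration and say nothing about how any particular platform generates or runs tests. The sketch only shows the two styles reacting differently to the same markup change.

```python
from dataclasses import dataclass, field


@dataclass
class Checkout:
    """Toy checkout whose markup can change without changing behavior."""

    button_class: str
    cart: dict = field(default_factory=dict)

    def render(self):
        return f'<button class="{self.button_class}">Place order</button>'

    def add_item(self, name, price_cents):
        self.cart[name] = price_cents

    def place_order(self):
        if not self.cart:
            return {"status": "rejected", "total_cents": 0}
        return {"status": "confirmed", "total_cents": sum(self.cart.values())}


def selector_test(app):
    """Traditional style: passes only while the markup keeps this exact class."""
    return 'class="submit-button"' in app.render()


def intent_test(app):
    """Intent style: complete the checkout flow and check the outcome."""
    app.add_item("notebook", 1200)
    app.add_item("pen", 300)
    return app.place_order() == {"status": "confirmed", "total_cents": 1500}


before = Checkout(button_class="submit-button")
after = Checkout(button_class="btn-checkout")  # same behavior, renamed class

for name, app in (("before", before), ("after", after)):
    print(name, "selector test:", selector_test(app), "intent test:", intent_test(app))
```

The intent-style check would start failing only if `place_order` itself changed, for example if an order with items stopped being confirmed. That is the failure you actually want a test to report.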

This mirrors the principle behind building marketing foundations before hiring specialists. You need the right architecture first. Hiring more QA engineers to maintain brittle selectors is like hiring specialists without foundational processes. The constraint is structural, not headcount.

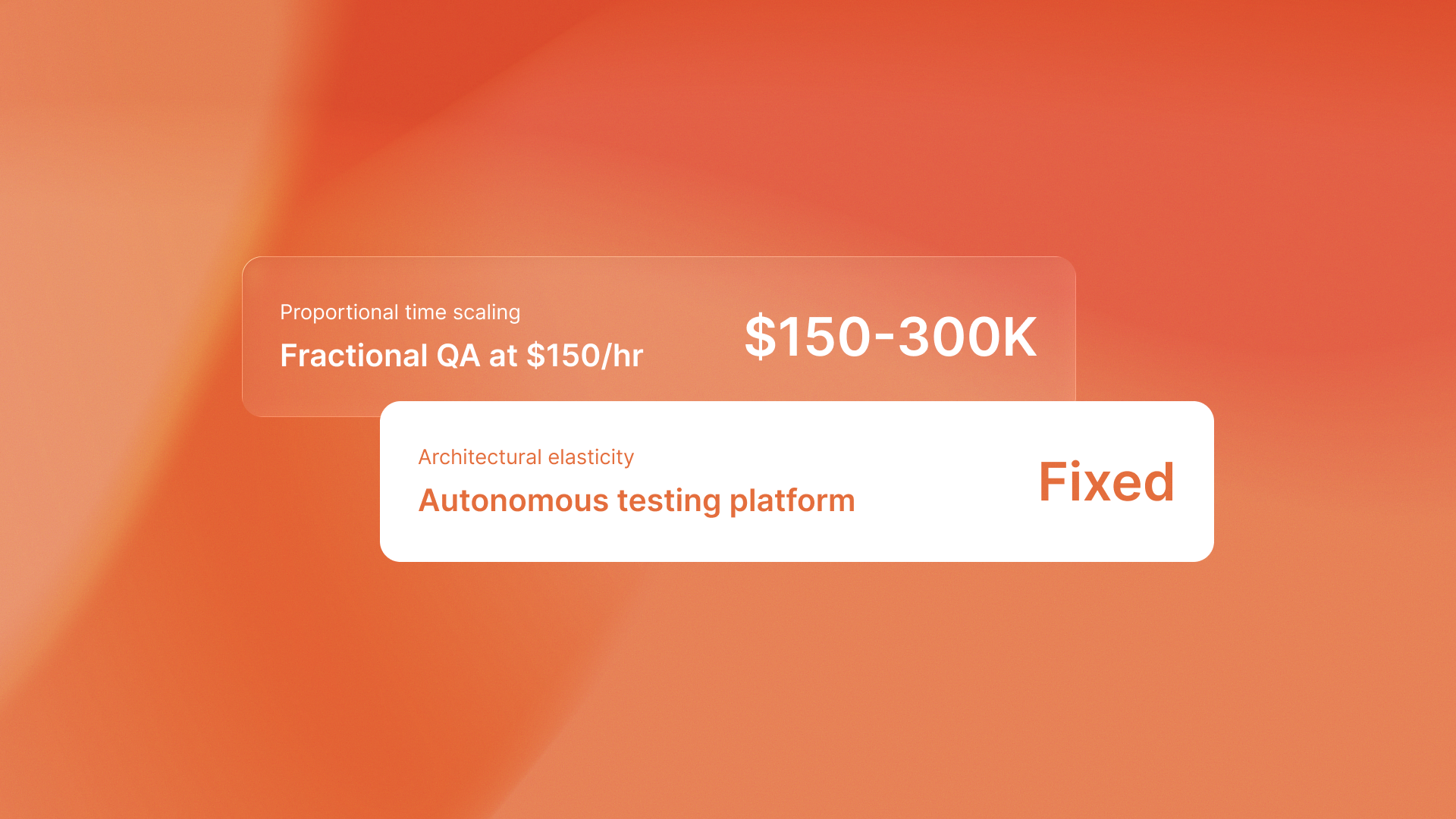

The real cost of traditional test automation maintenance

The maintenance tax isn't just about QA team utilization. It's about opportunity cost. Senior engineers maintaining brittle selectors aren't writing new tests. They aren't building test infrastructure. They aren't helping developers write testable code.

You've hired automation engineers to expand coverage. Instead, they're trapped in a maintenance loop that scales linearly with your test suite size. The constraint isn't talent or effort. It's the architecture.

I analyzed resource allocation at companies using traditional automation versus intent-based platforms:

Traditional automation teams

- 52% of QA engineering hours on test maintenance

- 28% on new test creation

- 20% on triage, meetings, and documentation

Intent-based testing teams

- 68% of time on new test creation and exploratory testing

- 18% on maintenance

- 14% on analysis and optimization
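Percentages are easier to feel as hours. The sketch below converts the two breakdowns above into weekly hours for a team. The team size (two engineers) and the 40-hour week are assumptions chosen for illustration, and the `weekly_hours` helper is made up; swap in your own numbers.

```python
def weekly_hours(shares_pct, engineers=2, hours_per_week=40):
    """Convert percentage shares of team time into hours per week."""
    if sum(shares_pct.values()) != 100:
        raise ValueError("shares must add up to 100%")
    team_hours = engineers * hours_per_week
    return {activity: team_hours * pct / 100 for activity, pct in shares_pct.items()}


# Shares taken from the two lists above.
TRADITIONAL = {
    "maintenance": 52,
    "new test creation": 28,
    "triage, meetings, and documentation": 20,
}
INTENT_BASED = {
    "new test creation and exploratory testing": 68,
    "maintenance": 18,
    "analysis and optimization": 14,
}

for label, shares in (("traditional", TRADITIONAL), ("intent-based", INTENT_BASED)):
    print(label)
    for activity, hours in weekly_hours(shares).items():
        print(f"  {activity}: {hours:.1f} h/week")
```

Under those assumptions, the maintenance line is the one to watch: it is the difference between most of one engineer's week and a fraction of it.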

The pattern resembles opaque EOR pricing with hidden costs. The invoice says one thing. The true total cost reveals itself over time. Test automation maintenance cost appears as "engineering overhead" in sprint planning. It's invisible until you measure where senior engineers actually spend their time.

For teams seeking efficiency across operations, the same rule applies. This is true whether you're leveraging AI tools for small business growth or improving test infrastructure. Automation without the right architecture adds overhead instead of removing it.

The takeaway

Traditional test automation maintenance cost comes from testing implementation details instead of intended behavior. Intent-based testing eliminates this overhead by anchoring tests to design specs that remain stable through code refactoring.

The maintenance tax isn't inevitable. It's an artifact of testing the wrong thing. Ready to reclaim your QA team's time? Start building tests that don't break when your code does.
