Why 94% of your pages get zero traffic (and how broken link checkers prevent it)

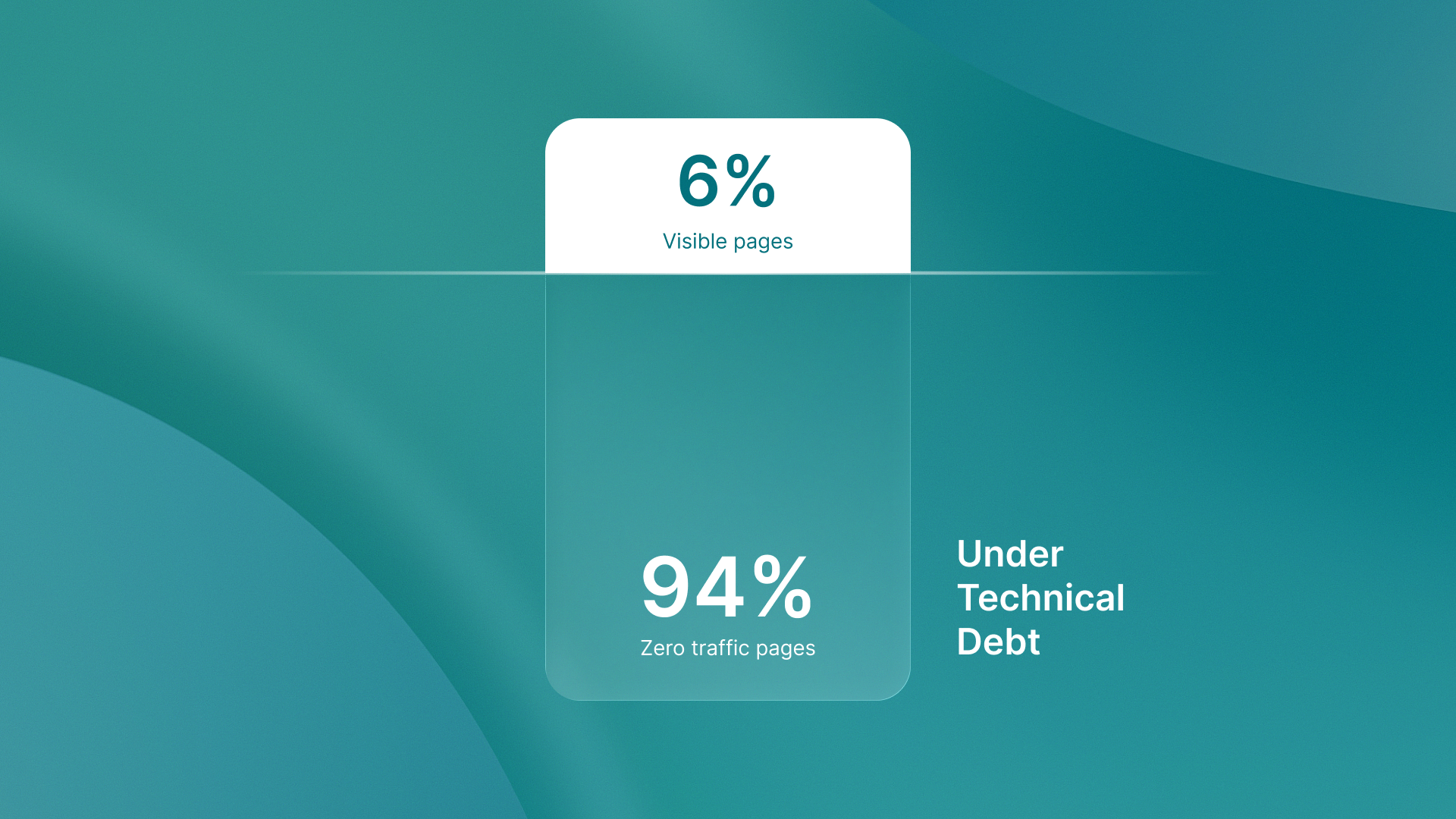

Most websites are invisible.

94% of pages get zero traffic from Google. Not low traffic. Zero.

Often the cause isn't content quality. It's accumulated technical debt that manual processes cannot prevent at scale.

The compounding problem manual audits miss

Broken links don't appear overnight. They accumulate gradually. One per deploy. One per content update. One per third-party integration change.

Over months, that's hundreds of invisible failures.

Manual audits catch yesterday's problems. They run quarterly, maybe monthly. By the time someone discovers broken links, those pages have already tanked in rankings. The damage compounds while teams wait for the next audit cycle.

Search engines don't wait. Every broken link signals lower quality. Every 404 error tells Google this page isn't maintained. And because position 1 gets 10x more clicks than position 10, even a small ranking drop is expensive. Small technical errors compound into zero-visibility catastrophes.

Geographic blind spots that tank international rankings

Here's what manual audits fundamentally cannot detect: geolocation-specific failures.

Websites serve different content to different regions. Different URLs. Different redirects. Different metadata. A page that works perfectly from San Francisco might return 404s in Singapore. A redirect chain that's clean in the US might break in Europe.

Manual testing from one location misses these failures entirely.

Tools like QA flow's audit tool solve this by testing from multiple geographic locations simultaneously. Automated broken link checkers catch region-specific errors that a single-location manual audit structurally cannot detect. And for sites with international reach, scale alone makes comprehensive manual coverage impossible.
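To make the idea concrete, here is a minimal sketch of the comparison step, not a description of how QA flow implements it. It assumes the status codes have already been collected from each region by some other means (regional runners, proxies, and so on). The region names, URL paths, and the `region_specific_failures` function are all hypothetical.

```python
from typing import Dict, List

# Hypothetical input shape: {region: {url: HTTP status seen from that region}}
StatusByRegion = Dict[str, Dict[str, int]]


def region_specific_failures(results: StatusByRegion) -> Dict[str, List[str]]:
    """Return URLs that fail in some regions but succeed in at least one other."""
    # Regroup the results so each URL maps to its per-region statuses.
    by_url: Dict[str, Dict[str, int]] = {}
    for region, statuses in results.items():
        for url, status in statuses.items():
            by_url.setdefault(url, {})[region] = status

    failures: Dict[str, List[str]] = {}
    for url, per_region in by_url.items():
        failing = sorted(r for r, s in per_region.items() if s >= 400)
        # Failing everywhere is an ordinary broken link, not a regional one.
        if failing and len(failing) < len(per_region):
            failures[url] = failing
    return failures


# Illustrative data only: a page that works from the US but 404s from Singapore.
sample = {
    "us-west": {"/pricing": 200, "/docs": 200},
    "ap-southeast": {"/pricing": 404, "/docs": 200},
}
print(region_specific_failures(sample))  # {'/pricing': ['ap-southeast']}
```

A check run from a single location would only ever see one row of this data, which is why it has no way to surface the `/pricing` failure.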

Zero-click search makes errors more expensive

60% of searches in 2026 are zero-click due to optimized snippets and AI Overviews. Search behavior has shifted. Users get answers directly in search results without clicking through.

This makes structured data and schema markup critical. Missing structured data means losing visibility even when content ranks. Broken schema markup means AI Overviews skip your content entirely. These errors are invisible to manual reviewers but fatal to search performance.
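A basic version of this check can be automated. The sketch below uses only Python's standard library to pull JSON-LD blocks out of a page and flag the two failures described above: no structured data at all, or markup that does not parse. It is a shallow check under stated assumptions, not a validator against schema.org vocabularies, and the `schema_problems` name is hypothetical.

```python
import json
from html.parser import HTMLParser
from typing import List


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: List[str] = []
        self._in_jsonld = False
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def schema_problems(html: str) -> List[str]:
    """Return human-readable problems found in a page's JSON-LD markup."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    if not parser.blocks:
        return ["no JSON-LD block found"]

    problems: List[str] = []
    for index, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {index}: invalid JSON ({exc.msg})")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            # A top-level @graph container is allowed to omit @type.
            if not isinstance(item, dict) or ("@type" not in item and "@graph" not in item):
                problems.append(f"block {index}: missing @type")
    return problems
```

A stray trailing comma inside a JSON-LD block is enough to trigger the "invalid JSON" branch, and it is exactly the sort of error a visual review of the rendered page will never show.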

The architecture of prevention

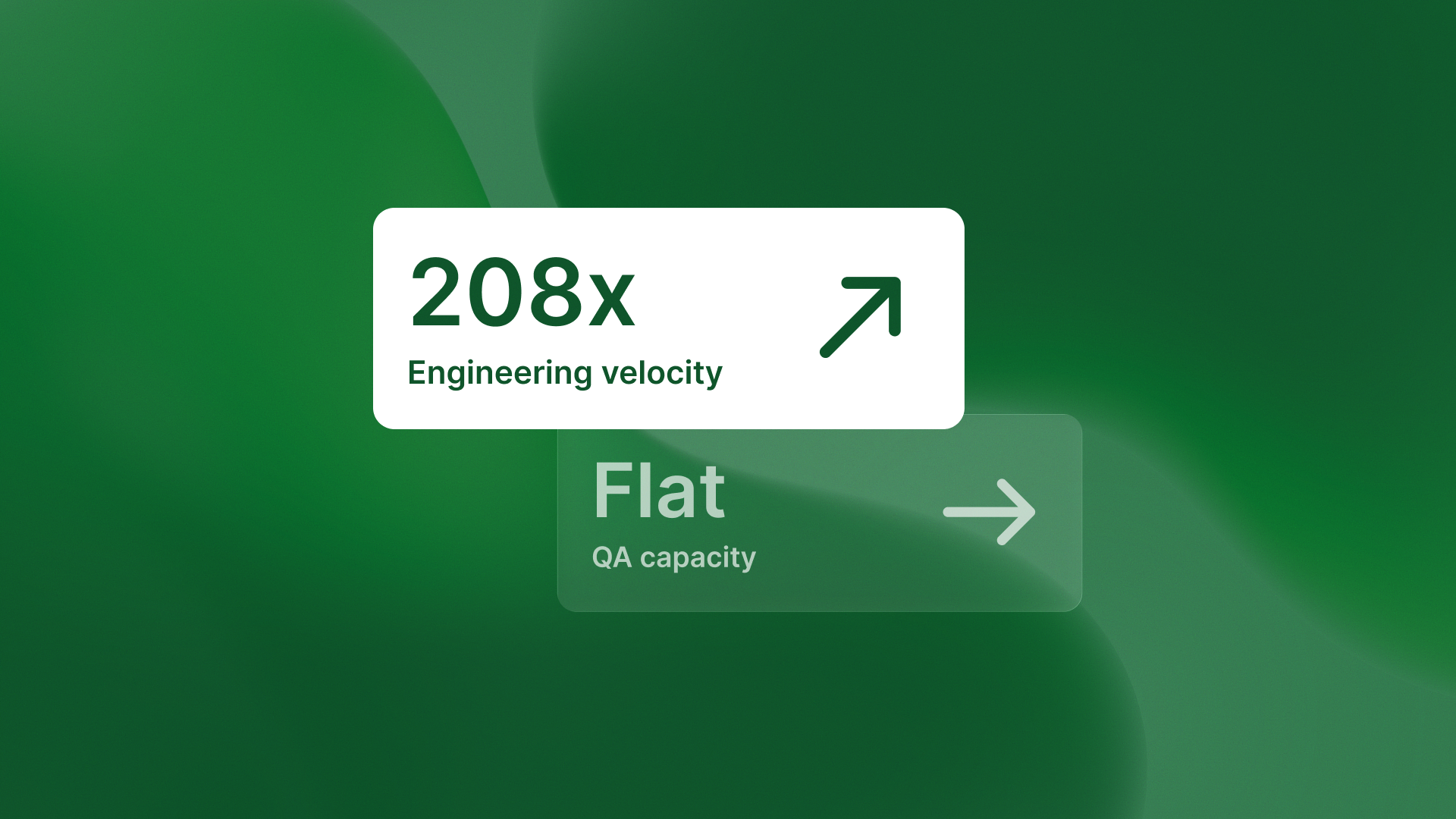

Instant automated detection changes the equation. Instead of quarterly audits finding accumulated damage, automated website testing catches errors on every push before they compound.

The workflow: Deploy triggers automated scan. Scan runs from multiple geographic locations. Broken links, SEO errors, and schema failures get flagged immediately. Teams fix issues before they accumulate into ranking losses.
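The link-checking part of that workflow can be sketched in a few dozen lines. This is an illustrative example using only Python's standard library, not QA flow's implementation. The function names are hypothetical, and the `fetch` parameter is an assumption added so the status lookup can be swapped out (for a regional runner, or for a fake in tests).

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from typing import Callable, Dict, Iterable, List
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect href values from <a> tags, skipping non-HTTP targets."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href and not href.startswith(("#", "mailto:", "tel:", "javascript:")):
                self.links.append(href)


def extract_links(html: str, base_url: str) -> List[str]:
    """Return absolute, de-duplicated link targets in document order."""
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return list(dict.fromkeys(urljoin(base_url, href) for href in parser.links))


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for url, or 0 if the request could not complete.

    Uses HEAD to avoid downloading bodies; some servers reject HEAD, so a
    production checker would likely fall back to GET.
    """
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as exc:
        return exc.code
    except (OSError, ValueError):
        return 0


def find_broken_links(
    urls: Iterable[str], fetch: Callable[[str], int] = http_status
) -> Dict[str, int]:
    """Map each broken URL to the status that marked it as broken."""
    broken: Dict[str, int] = {}
    for url in urls:
        status = fetch(url)
        if status == 0 or status >= 400:
            broken[url] = status
    return broken
```

In a deploy pipeline, a step could call `find_broken_links(extract_links(html, page_url))` for each published page and exit non-zero when the result is not empty, so that a broken link fails the deploy instead of waiting for the next audit.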

QA flow treats quality detection as continuous, not periodic. Every deploy gets tested. Every region gets validated. The system prevents accumulation rather than discovering damage months later.

| Manual Audits | Automated Detection |
| --- | --- |
| Quarterly or monthly cycles | Every deployment |
| Tests one location | Validates multiple regions |
| Finds yesterday's problems | Prevents tomorrow's |
| Hundreds of accumulated errors | No error buildup |

The takeaway

Quality isn't about fixing more issues after launch. It's about catching them before they compound into ranking losses.

Manual audits find yesterday's problems. Automated detection prevents tomorrow's.
