Why automated testing misses geolocation bugs and edge cases

Automated testing catches the bugs you expect. User feedback catches the bugs you didn't.

The coverage illusion

Most engineering teams treat test coverage as a quality metric. Hit 80% coverage and you're good to ship.

The problem: automated tests run in sanitized environments with predictable inputs. They validate what you built against your specifications. They don't validate your specifications against reality.

According to Kualitee, AI automation reduces testing time by 50-70%. That's real efficiency. But speed doesn't equal scope.

Automated tests can't replicate the combination of user location, device quirks, network instability, and unexpected interaction sequences that expose edge cases in production. The gap isn't test quality. It's test scope.
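To make the scope gap concrete, here is a rough back-of-the-envelope sketch. Every region, device, network profile, and flow below is a made-up placeholder, and the "typical CI job" is an assumption rather than a measurement; the point is only how quickly the combinations outgrow what a single pipeline exercises.

```python
from itertools import product

# All values below are hypothetical, chosen only to illustrate scale.
regions = ["US", "DE", "FR", "BR", "IN", "JP"]
devices = ["Pixel 8", "Galaxy A14", "iPhone 15", "iPad", "desktop"]
networks = ["wifi", "4g", "3g", "offline-then-reconnect"]
flows = ["browse", "search", "checkout"]

# Every environment/flow combination a real user could arrive with.
production_matrix = list(product(regions, devices, networks, flows))

# Assumed CI setup: one region, one device, one stable network.
ci_matrix = list(product(["US"], ["desktop"], ["wifi"], flows))

share_of_scope = len(ci_matrix) / len(production_matrix)
print(len(production_matrix), len(ci_matrix), f"{share_of_scope:.1%}")
# prints: 360 3 0.8%
```

Even with perfect line coverage, the CI job in this sketch touches under 1% of the environment combinations.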

Where real bugs hide

Mobile devices account for 62.7% of global web traffic. 76% of mobile users who search for something nearby visit a related business within a day.

When local intent converts that fast, a geolocation-specific bug has same-day revenue impact.

Automated testing runs in controlled CI/CD environments. Stable networks. Standard device configurations. Predictable browser versions.

What automated tests can't catch

- Checkout flows that fail only for EU users hitting GDPR consent conflicts

- Navigation menus that break on specific Android versions with non-standard screen aspect ratios

- Payment processors that region-lock based on IP geolocation

- Content rendering issues triggered by locale-specific character sets

These bugs don't surface until real users with real locations on real devices hit production.

The feedback-to-test-case loop

User-reported bugs represent interaction patterns your scripts never anticipated. Each production bug is a blind spot in your test design.

The question isn't whether to collect feedback. It's whether that feedback makes its way back into your tests.

How structured feedback loops work

Users report bugs with context: device, location, browser, reproduction steps. Reports get parsed into structured test cases with environment metadata.
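As a minimal sketch of that parsing step, assuming reports arrive as simple key/value records: the `RegressionCase` type, the field names, and the `report_to_case` helper are all hypothetical, not any particular platform's schema.

```python
from dataclasses import dataclass

# Hypothetical schema: the four context fields named above, plus a title.
REQUIRED_FIELDS = ("title", "device", "location", "browser", "steps")


@dataclass(frozen=True)
class RegressionCase:
    title: str
    device: str
    location: str          # region code, normalized to upper case
    browser: str
    steps: tuple


def report_to_case(report: dict) -> RegressionCase:
    """Turn a raw bug report into a structured case, rejecting reports
    that lack the context needed to reproduce them."""
    missing = [name for name in REQUIRED_FIELDS if not report.get(name)]
    if missing:
        raise ValueError("report is missing context: " + ", ".join(missing))
    return RegressionCase(
        title=report["title"].strip(),
        device=report["device"].strip(),
        location=report["location"].strip().upper(),
        browser=report["browser"].strip(),
        steps=tuple(s.strip() for s in report["steps"] if s.strip()),
    )


# Example report (invented for illustration).
case = report_to_case({
    "title": "Checkout button unresponsive after consent banner",
    "device": "Galaxy A14",
    "location": "de",
    "browser": "Chrome 124",
    "steps": ["Open /cart", "Accept cookies", "Tap Checkout"],
})
print(case.location)  # prints: DE
```

Rejecting incomplete reports early is a design choice: a case without device or location metadata can't be replayed in a matching environment later.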

Test cases get added to the automated suite as regression tests. Each production bug becomes a permanent validation checkpoint.
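One way this could look in practice, sketched with a hypothetical in-memory `RegressionSuite`. A real suite would more likely live in your test runner or test-management tool; the fingerprinting scheme here is an assumption, used only so that repeated reports of the same bug don't add duplicate tests.

```python
import hashlib
import json


class RegressionSuite:
    """Hypothetical registry: each unique production bug becomes one case."""

    def __init__(self):
        self._cases = {}

    def add(self, case: dict) -> bool:
        """Store the case; return False if an identical one is already tracked."""
        fingerprint = hashlib.sha256(
            json.dumps(case, sort_keys=True).encode("utf-8")
        ).hexdigest()
        if fingerprint in self._cases:
            return False
        self._cases[fingerprint] = case
        return True

    def run(self, check) -> list:
        """Call check(case) -> bool for every case and return the failures."""
        return [case for case in self._cases.values() if not check(case)]

    def __len__(self):
        return len(self._cases)


suite = RegressionSuite()
bug = {
    "device": "Galaxy A14",
    "location": "DE",
    "browser": "Chrome 124",
    "steps": ["Open /cart", "Accept cookies", "Tap Checkout"],
}
first = suite.add(bug)         # new bug: stored
second = suite.add(dict(bug))  # same bug reported again: ignored

# Stand-in check that pretends the DE checkout is still broken.
failures = suite.run(lambda case: case["location"] != "DE")
print(len(suite), len(failures))  # prints: 1 1
```

The `check` callable is a placeholder for whatever actually replays the steps in the recorded environment.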

This creates continuous improvement. Your test suite evolves with actual usage patterns, not hypothetical scenarios.

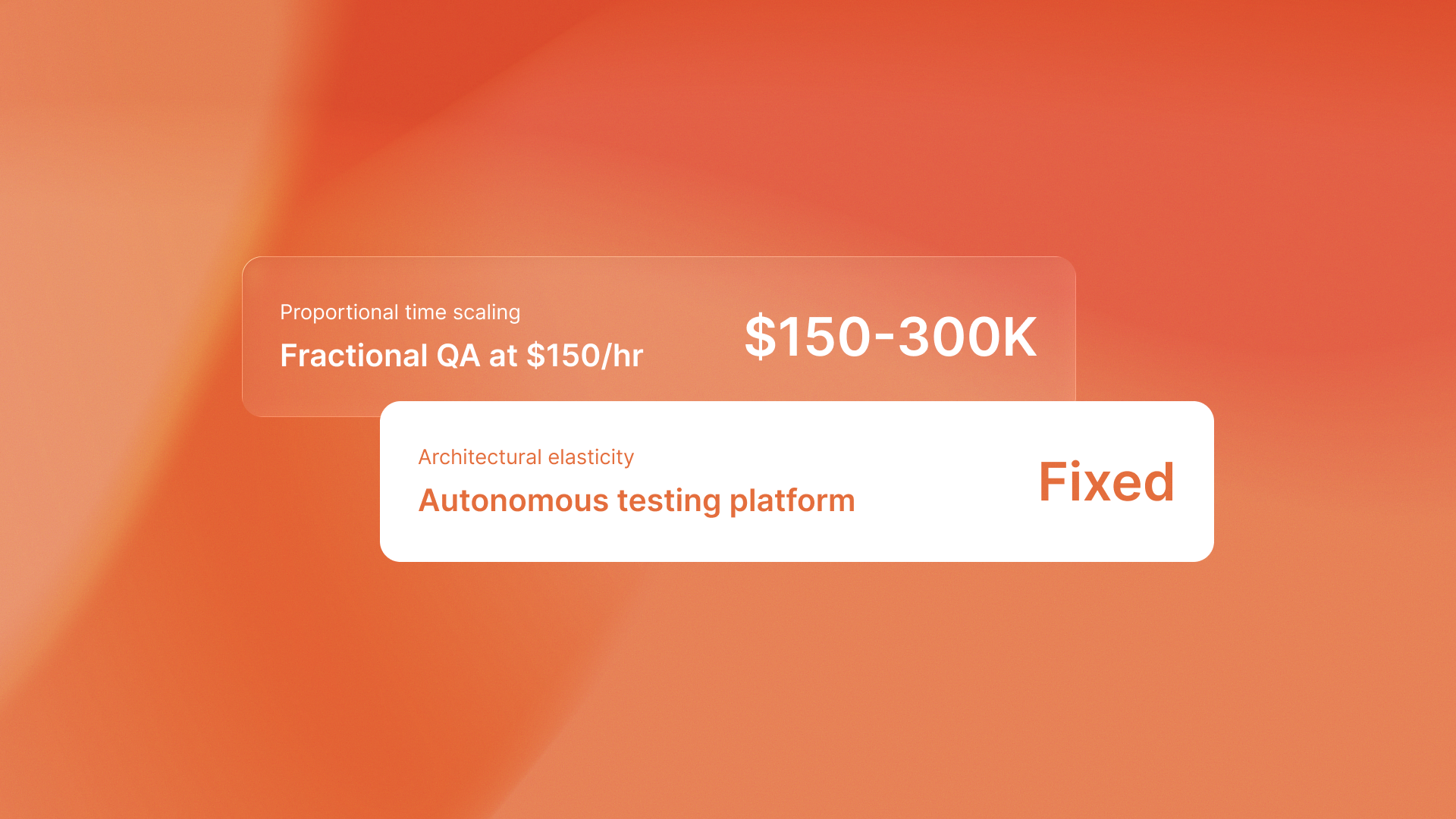

QA flow converts user feedback into executable test cases, closing the loop between production bugs and automated coverage. The platform parses structured audit tickets with device metadata, geolocation context, and reproduction steps into regression tests.

Geographic and device-specific bugs require location-aware testing infrastructure to validate. Standard automated testing can't simulate EU regulatory flows or region-locked payment providers. Feedback-driven test generation can, because it uses real-world failure data to build geolocation-aware test scenarios.
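A rough sketch of what "geolocation-aware" could mean at the test-setup level. It assumes a browser automation tool that accepts per-context emulation settings; the option names below mirror the keyword arguments of Playwright for Python's `browser.new_context` (`locale`, `timezone_id`, `geolocation`, `permissions`, `viewport`), but the region table, the failure record, and `scenario_from_failure` are hypothetical.

```python
import copy

# Hypothetical lookup table; a real one would need a maintained data source.
REGION_PROFILES = {
    "DE": {"locale": "de-DE", "timezone_id": "Europe/Berlin",
           "geolocation": {"latitude": 52.52, "longitude": 13.405}},
    "FR": {"locale": "fr-FR", "timezone_id": "Europe/Paris",
           "geolocation": {"latitude": 48.8566, "longitude": 2.3522}},
    "JP": {"locale": "ja-JP", "timezone_id": "Asia/Tokyo",
           "geolocation": {"latitude": 35.6762, "longitude": 139.6503}},
}


def scenario_from_failure(failure: dict) -> dict:
    """Build a test scenario whose browser context matches the environment
    in which the production failure was reported."""
    region = failure["location"]
    if region not in REGION_PROFILES:
        raise KeyError(f"no region profile for {region!r}")
    options = copy.deepcopy(REGION_PROFILES[region])
    options["permissions"] = ["geolocation"]  # let the page read the location
    if "viewport" in failure:
        width, height = failure["viewport"]
        options["viewport"] = {"width": width, "height": height}
    return {
        "name": f"[{region}] {failure['title']}",
        "context_options": options,
        "steps": list(failure["steps"]),
    }


scenario = scenario_from_failure({
    "title": "Consent banner blocks checkout",
    "location": "DE",
    "viewport": (412, 915),
    "steps": ["Open /cart", "Accept cookies", "Tap Checkout"],
})
print(scenario["name"])  # prints: [DE] Consent banner blocks checkout
```

If you do use Playwright, `context_options` could be passed along as `browser.new_context(**scenario["context_options"])`. Note that browser-level emulation only changes what the page sees through the browser's own APIs; anything keyed off the client IP, such as a region-locked payment processor, would also need traffic routed through that region.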

The takeaway

Automated testing validates implementation against specifications. User feedback validates specifications against reality.

The companies that win are the ones that build website feedback loops where each production bug strengthens the test suite, not the ones chasing 100% coverage in sanitized environments.

Quality isn't about preventing every bug. It's about learning from the ones that escape.
