How GitHub test generation solves the QA scaling bottleneck

March 2024. Series B startup. 200 engineers, 8 QA. The QA team is drowning in regression test maintenance while critical bugs slip into production.

This isn't an execution problem. It's a structural limitation baked into how traditional test automation works.

The QA bottleneck emerges predictably at scale

The software testing market is projected to grow from $55.8B in 2024 to $112.5B by 2034. This growth is driven by a basic constraint: test coverage can't keep pace with engineering speed (ThinkSys QA Trends Report 2026). The automation testing segment alone is projected to grow from $28.1B in 2023 to $55.2B by 2028, a 14.5% CAGR.

Here's what happens when you scale from 50 to 500 engineers:

- Your feature velocity doubles every 12 months

- Your test suite grows linearly with features

- Your QA team can't write test cases fast enough to maintain coverage

- QA cycle time stretches from 3 days to 2 weeks

You face a binary choice: hire QA proportionally or accept slower releases.

The math doesn't work either way. Proportional hiring burns runway. Slower releases kill competitive velocity. At Islands, I've seen this pattern across 12+ portfolio companies. The QA scaling trap hits exactly when Series B startups can least afford a 3-6 month hiring cycle.

Traditional automation shifts the bottleneck but doesn't eliminate it

Most teams respond by investing in test automation frameworks. They adopt Selenium, Cypress, or Playwright. They hire automation engineers. The test execution gets faster.

But the bottleneck doesn't disappear. It just moves.

Adoption is already high: 77.7% of organizations have adopted AI-first quality engineering, and 74.6% of teams use two or more automation frameworks (ThinkSys QA Trends Report 2026). Yet QA scaling problems persist.

Why? Traditional automation still requires humans to define test cases. The constraint isn't test execution speed. It's the human labor required to write test definitions in the first place.

I analyzed time tracking data across Islands portfolio companies in Q4 2024. Teams spent 60-70% of QA engineering time writing and maintaining test cases. Only 30-40% went to analyzing results. The automation accelerated execution. The bottleneck remained in test case creation.

GitHub test generation eliminates the definition bottleneck

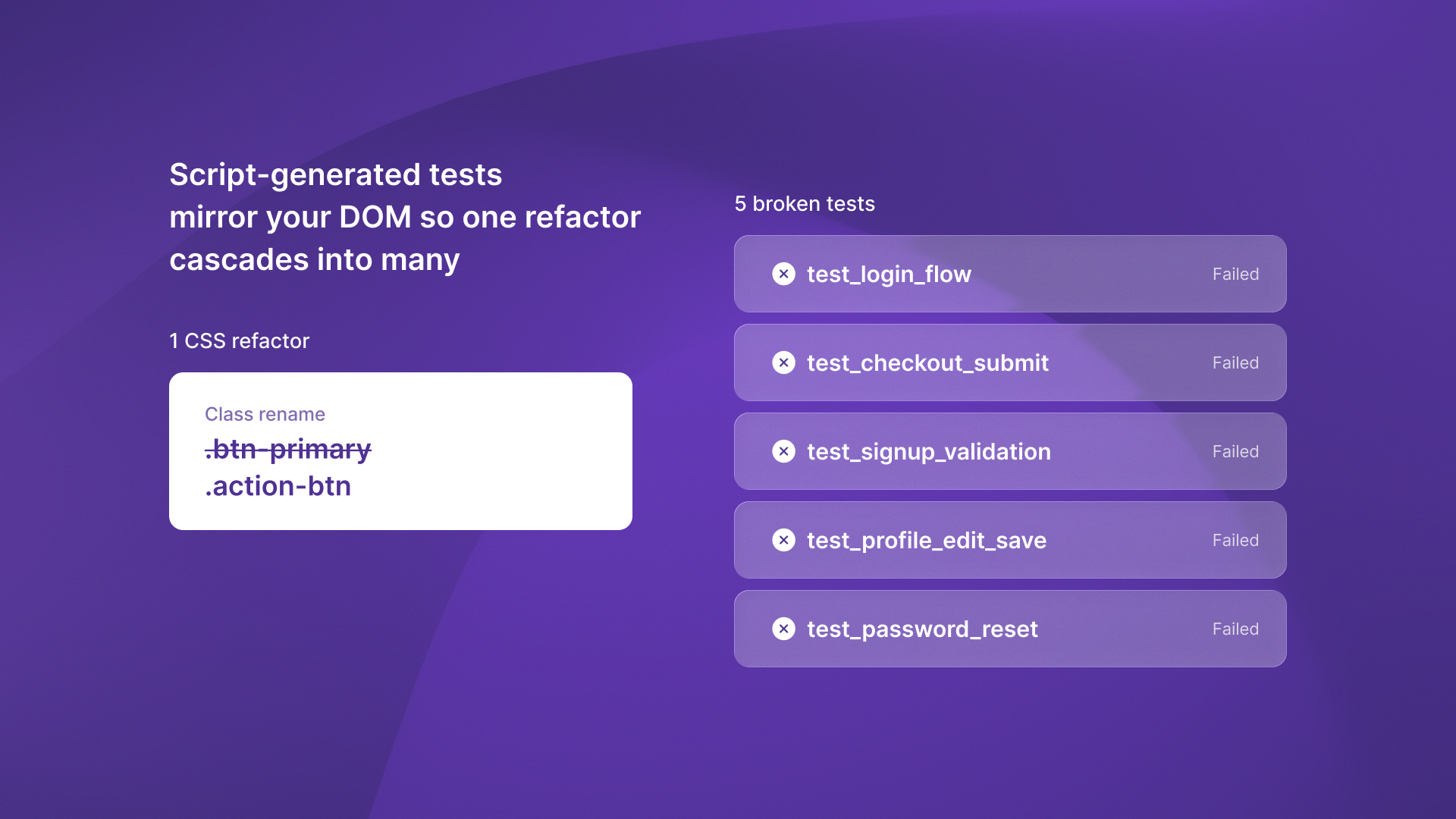

This is where autonomous testing vs automated testing becomes a critical distinction. Automated testing executes tests humans define. Autonomous testing generates tests directly from code changes.

Commit-triggered testing works differently. When a developer commits code, the system:

- Analyzes the commit message to understand intent

- Parses the git diff to identify changed functionality

- Generates targeted test cases for the specific changes

- Executes tests and reports results in the PR
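The first three steps can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product works: the names `parse_diff`, `plan_tests`, and `ChangedFile` are hypothetical, the commit message's first line is treated as a rough stand-in for "intent", and actual test generation and execution are left out. The parser reads standard unified diff output and returns the files and line ranges a generator would need to target.

```python
import re
from dataclasses import dataclass, field

# "+++ b/path" names the post-change file in a unified diff.
FILE_RE = re.compile(r"^\+\+\+ b/(.+)$")
# Hunk header, e.g. "@@ -10,2 +10,4 @@ def checkout(cart):".
HUNK_RE = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,(\d+))? @@ ?(.*)$")


@dataclass
class ChangedFile:
    path: str
    # One (start_line, line_count, context) tuple per hunk, in new-file terms.
    hunks: list = field(default_factory=list)


def parse_diff(diff_text):
    """Collect changed files and hunk ranges from unified diff text.

    Simplification: an added line whose content starts with "++ " would be
    mistaken for a file header. A production parser would track hunk sizes.
    """
    files = []
    current = None
    for line in diff_text.splitlines():
        if line.startswith("+++ "):
            m = FILE_RE.match(line)
            # Deleted files show "+++ /dev/null" and are skipped.
            current = ChangedFile(path=m.group(1)) if m else None
            if current is not None:
                files.append(current)
            continue
        m = HUNK_RE.match(line)
        if m and current is not None:
            start = int(m.group(1))
            count = int(m.group(2)) if m.group(2) is not None else 1
            current.hunks.append((start, count, m.group(3).strip()))
    return files


def plan_tests(commit_message, diff_text):
    """Pair the commit's stated intent with each changed region."""
    stripped = commit_message.strip()
    intent = stripped.splitlines()[0] if stripped else ""
    targets = []
    for changed in parse_diff(diff_text):
        for start, count, context in changed.hunks:
            targets.append({
                "file": changed.path,
                "start_line": start,
                "end_line": start + max(count, 1) - 1,
                "context": context,  # enclosing function, when git reports it
                "intent": intent,
            })
    return targets


SAMPLE_DIFF = """\
diff --git a/app/cart.py b/app/cart.py
--- a/app/cart.py
+++ b/app/cart.py
@@ -10,2 +10,4 @@ def checkout(cart):
     total = sum(item.price for item in cart)
+    if total < 0:
+        raise ValueError("negative total")
     return total
"""

for target in plan_tests("Reject negative cart totals", SAMPLE_DIFF):
    print(target)
```

Each target pairs a changed region with the stated intent, which is the input a test generator would work from in the third step.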

No human defines the test cases. The system maintains test coverage at engineering velocity without requiring QA engineers to write every test definition.

I saw this firsthand at ReachSocial. They were maintaining 180 Cypress tests manually. Each frontend refactor broke 15-20 selectors. QA spent 2 days per sprint just fixing broken tests. When they shifted to commit-triggered autonomous test generation, test maintenance time dropped to near zero. The system generated tests from code intent, not brittle DOM selectors.

Teams using this architecture reduce QA cycle time from 2 weeks to 3 days. They maintain or improve bug detection rates. The constraint changes from QA throughput to engineering merge frequency.

Test automation headcount redeploys to high-value exploratory testing

When autonomous systems handle regression coverage, QA engineers stop spending 60-70% of their time maintaining test suites. They redeploy to exploratory testing and UX validation. This catches critical bugs that automated coverage misses.

The result: QA teams find 3x more critical bugs per hour. They're focused on high-judgment testing that requires human intuition, not mechanical test execution.

At ReachSocial, this shift let the QA team focus on edge cases and user flow validation. They caught integration issues autonomous regression testing couldn't detect. The QA headcount stayed flat while engineering doubled.

The pattern mirrors fractional hiring models in other domains. It removes low-value mechanical work. It shifts humans to high-judgment tasks. QA engineers become quality strategists, not test script maintainers.

The economic case for commit-triggered testing

Do the math on proportional QA hiring. A 200-engineer team needs 8-16 dedicated QA engineers at $120K-$150K fully loaded annually. That's $960K to $2.4M in direct QA costs.

Or you accept the 25% automation coverage ceiling that traditional platforms hit. Ship with 75% of your application untested by automation. Rely on manual QA for the rest.
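The hiring figures above are simple multiplication, and it can help to rerun them with your own numbers. The sketch below reproduces the range from the headcount and fully loaded cost quoted in this section; the function name `qa_cost_range` is just an illustrative helper.

```python
def qa_cost_range(headcount, loaded_cost):
    """Return (low, high) annual QA cost from (min, max) headcount and cost."""
    low_heads, high_heads = headcount
    low_cost, high_cost = loaded_cost
    return low_heads * low_cost, high_heads * high_cost


engineers = 200
qa_heads = (8, 16)               # dedicated QA engineers, from the text
loaded_cost = (120_000, 150_000)  # fully loaded annual cost per QA engineer

low, high = qa_cost_range(qa_heads, loaded_cost)
print(f"${low:,} to ${high:,}")  # prints "$960,000 to $2,400,000"

# Engineers supported per QA engineer at each end of the range.
ratios = [engineers / heads for heads in qa_heads]
print(ratios)  # prints "[25.0, 12.5]"
```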

The hidden cost isn't the salaries. It's the 3-6 month ramp time for each new QA hire. By the time they're productive, your engineering team has shipped another quarter of features. The coverage gap widens.

Commit-triggered test generation changes the equation. Test coverage grows automatically with code changes. QA teams maintain coverage at engineering velocity while focusing on the exploratory testing that actually catches critical bugs.

Small businesses using AI automation tools in other domains see similar patterns. They remove repetitive work. They scale operations without adding the same number of staff.

The constraint that doesn't scale

The QA scaling bottleneck isn't a team failure. It's the predictable outcome of an architecture that requires humans to define test cases faster than engineers write features.

That constraint doesn't scale.

GitHub test generation changes the architecture. Because tests are generated from each commit, coverage keeps pace with code changes and QA engineers stay free for exploratory testing.

The choice isn't between QA headcount and release velocity. It's between architectures that scale and architectures that don't.

Ready to scale your test coverage without scaling your QA team? Start with autonomous testing and maintain coverage at engineering velocity.
