Why multi-agent testing systems aren't Selenium with AI

Most AI testing tools are Selenium plugins with a chatbot.

They generate scripts. They don't generate tests. The market conflates two fundamentally different architectures: script generators that automate test code writing, and autonomous systems that generate tests from behavioral intent.

Both use AI. Only one eliminates the actual bottleneck in QA.

Script generators automate the wrong part

60% of teams prioritize automating regression testing to free manual testers for exploratory work. But script generation doesn't solve the maintenance burden, and it doesn't remove the need for humans to define the test cases.

It just automates the writing of what humans already specified.

The workflow stays the same: humans choose what to test, AI writes the Selenium code, and humans update brittle selectors when the application changes. Faster authoring. Same bottleneck.

The selector maintenance trap

Every CSS class change breaks your test suite. Every refactoring effort triggers cascading failures. Script generators produce implementation-coupled tests that mirror your DOM structure.

When the implementation changes, every selector must be manually updated. This is not autonomy. This is accelerated script templating.
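A minimal sketch of that coupling, using only Python's standard-library HTML parser rather than Selenium itself. The markup, the class names, and the `find_by_class` helper are all hypothetical; the point is only that a purely cosmetic class rename makes a class-based lookup come back empty.

```python
from html.parser import HTMLParser


class ClassFinder(HTMLParser):
    """Collects tag names of elements that carry a given CSS class."""

    def __init__(self, css_class):
        super().__init__()
        self.css_class = css_class
        self.matches = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value may be None.
        classes = (dict(attrs).get("class") or "").split()
        if self.css_class in classes:
            self.matches.append(tag)


def find_by_class(html, css_class):
    """Hypothetical stand-in for a CSS-class selector lookup."""
    finder = ClassFinder(css_class)
    finder.feed(html)
    return finder.matches


# Hypothetical markup before and after a cosmetic refactor.
BEFORE = '<form><button class="btn-primary">Place order</button></form>'
AFTER = '<form><button class="button button--cta">Place order</button></form>'

found_before = find_by_class(BEFORE, "btn-primary")  # the selector matches
found_after = find_by_class(AFTER, "btn-primary")    # same button, no match
```

The button still exists and still does the same thing in `AFTER`, but the lookup that the test depends on no longer finds it.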

Multi-agent systems use orchestration and state machines

Autonomous testing platforms like QA flow operate on a different architectural layer. They use orchestration systems, state machines, and confidence thresholds to decide how to generate tests, and they base those decisions on intent, not on screenshots of the current UI.

Self-healing test automation reduces maintenance time when implemented as autonomous systems that understand behavioral intent. Not when implemented as AI-assisted script writers that still require human-defined test cases.

How orchestration differs from script templates

Multi-agent systems read design specifications to understand what behavior should exist. Figma files. User stories. Commit messages. They generate tests that validate the intent, not the implementation.

When you refactor your CSS classes, the tests survive because they're coupled to behavior, not selectors.
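One way to picture a behavior-coupled check, as a rough sketch: look for a button by what the user sees (its label) instead of by its class. This uses only Python's standard library; the markup and the `has_button` helper are hypothetical and are not how any particular platform implements it.

```python
from html.parser import HTMLParser


class ButtonTextCollector(HTMLParser):
    """Collects the visible text of every <button>, ignoring its attributes."""

    def __init__(self):
        super().__init__()
        self._in_button = False
        self._buffer = []
        self.labels = []

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._in_button = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_button:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "button" and self._in_button:
            self.labels.append("".join(self._buffer).strip())
            self._in_button = False


def has_button(html, label):
    """True if some button on the page shows exactly this label."""
    collector = ButtonTextCollector()
    collector.feed(html)
    return label in collector.labels


# Hypothetical markup: classes renamed and an inner <span> added.
BEFORE = '<form><button class="btn-primary">Place order</button></form>'
AFTER = (
    '<form><button class="button button--cta">'
    "<span>Place order</span></button></form>"
)

survives_before = has_button(BEFORE, "Place order")
survives_after = has_button(AFTER, "Place order")
```

Both checks pass because the assertion is about what the user can do ("there is a Place order button"), which the refactor did not change.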

State machines track test execution context and make autonomous decisions about coverage. Confidence thresholds determine when to flag edge cases versus when to proceed. This is not script generation with smarter autocomplete. This is architectural autonomy.
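To make the state machine and threshold idea concrete, here is a small illustrative sketch. The state names, the `0.8` threshold, and the candidate tests are assumptions chosen for the example, not values taken from QA flow or any other product.

```python
from enum import Enum, auto


class State(Enum):
    EXPLORING = auto()     # candidate test is still being evaluated
    NEEDS_REVIEW = auto()  # flagged as an edge case for a human
    COVERED = auto()       # accepted without human input


# Hypothetical threshold: below this, the candidate is flagged.
CONFIDENCE_THRESHOLD = 0.8


def next_state(state, confidence):
    """Transition for one candidate test given a confidence in [0, 1]."""
    if state is not State.EXPLORING:
        return state  # NEEDS_REVIEW and COVERED are terminal in this sketch
    if confidence >= CONFIDENCE_THRESHOLD:
        return State.COVERED
    return State.NEEDS_REVIEW


def triage(candidates):
    """Map each candidate test name to the state it ends in."""
    return {
        name: next_state(State.EXPLORING, confidence)
        for name, confidence in candidates.items()
    }


result = triage({"checkout happy path": 0.95, "expired coupon": 0.55})
```

Here the high-confidence candidate proceeds on its own, while the low-confidence one is routed to a person. A real system would likely have more states and richer transitions.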

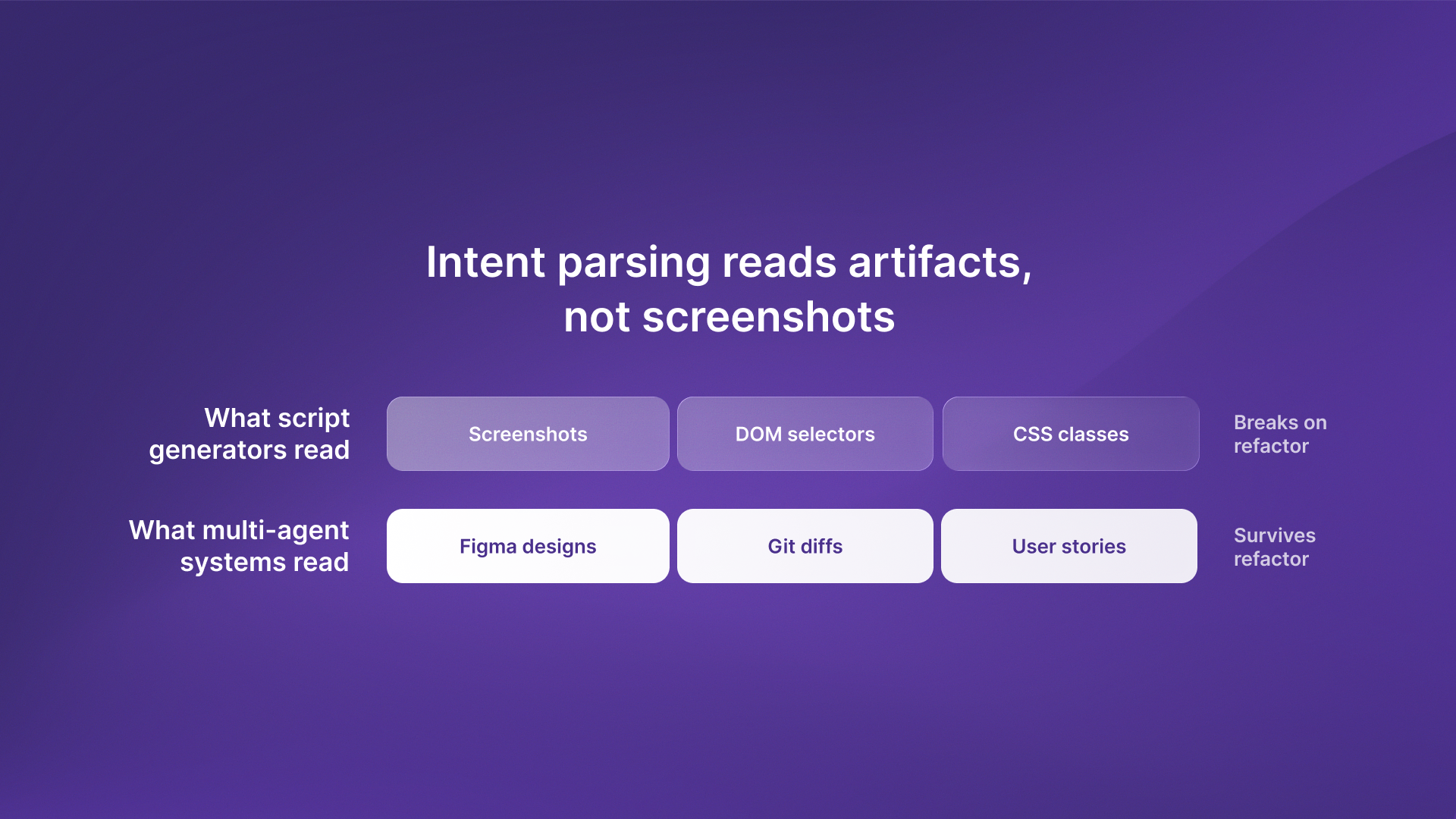

Intent-based testing reads artifacts, not screenshots

AI-first test automation analyzes historical test data to prioritize high-risk areas. This works only when the system understands intent from design artifacts. Figma designs. Git diffs. Not screenshots of the current page.
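As a simplified illustration of prioritizing by historical test data, the sketch below ranks product areas by past failure rate. The `rank_by_risk` function, the scoring rule, and the numbers are all made up for the example; a production system would presumably weigh many more signals.

```python
def rank_by_risk(history):
    """Order areas by historical failure rate, highest first.

    `history` maps an area name to a (failures, total_runs) pair.
    """

    def failure_rate(item):
        failures, runs = item[1]
        return failures / runs if runs else 0.0

    ranked = sorted(history.items(), key=failure_rate, reverse=True)
    return [area for area, _ in ranked]


# Made-up numbers, purely for illustration.
history = {"checkout": (12, 100), "search": (2, 100), "login": (5, 50)}
ranking = rank_by_risk(history)
```

With these numbers, checkout (12% failures) ranks ahead of login (10%) and search (2%), so generated tests would concentrate there first.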

The difference: implementation-based scripts break on every CSS change. Intent-based tests survive refactoring because they validate behavior, not DOM structure.

What intent parsing actually means

When QA flow reads a Figma design, it extracts the intended user flows. What actions should be possible. What states should exist. What validations should trigger.
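A parsed intent could be represented roughly like this. The `FlowIntent` structure and its field names are hypothetical, chosen to mirror the three things listed above (actions, states, validations); this is not QA flow's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class FlowIntent:
    """One intended user flow, described without reference to the DOM."""

    name: str
    actions: list = field(default_factory=list)      # what should be possible
    states: list = field(default_factory=list)       # what states should exist
    validations: list = field(default_factory=list)  # what should trigger


def to_test_outline(intent):
    """Render an intent as a human-readable test outline."""
    lines = [f"Flow: {intent.name}"]
    lines += [f"  do: {action}" for action in intent.actions]
    lines += [f"  expect state: {state}" for state in intent.states]
    lines += [f"  expect validation: {rule}" for rule in intent.validations]
    return lines


# Hypothetical flow, as it might be read from a design file.
checkout = FlowIntent(
    name="checkout",
    actions=["add item to cart", "submit payment"],
    states=["cart non-empty", "order confirmed"],
    validations=["card number is required"],
)
outline = to_test_outline(checkout)
```

Nothing in the structure mentions a selector, a class name, or a page layout, which is what lets the same intent outlive a frontend rewrite.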

When developers refactor the frontend, the tests regenerate against the new implementation while preserving the behavioral assertions. Script generators can't do this. They're locked to the implementation you showed them during recording.
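The separation can be sketched as two layers: behavioral assertions that stay fixed, and locator bindings that are rebuilt for each implementation. Everything here is hypothetical, and the sketch takes the new bindings as given; how a real system discovers them is out of scope.

```python
# Behavioral assertions: what must be true, independent of the markup.
ASSERTIONS = ["user can submit order", "user sees confirmation"]


def regenerate(assertions, implementation):
    """Bind each assertion to the locator the current implementation exposes."""
    return {assertion: implementation[assertion] for assertion in assertions}


# Hypothetical locator maps for the old and the refactored frontend.
OLD_UI = {
    "user can submit order": ".btn-primary",
    "user sees confirmation": "#msg",
}
NEW_UI = {
    "user can submit order": "[data-action=submit]",
    "user sees confirmation": ".toast--success",
}

old_tests = regenerate(ASSERTIONS, OLD_UI)
new_tests = regenerate(ASSERTIONS, NEW_UI)
```

The set of assertions is identical before and after the refactor; only the bindings differ. A recorded script has no such separation, so the assertion and the selector change together or not at all.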

Test design techniques built on intent rather than implementation create coverage that survives codebase evolution.

The fundamental shift: generation vs execution

Autonomous testing generates tests from behavioral intent. Automated testing executes human-written test cases faster.

Both use AI. One replaces the bottleneck. The other accelerates a task that's already fast.

The QA flow audit illustrates this difference. It analyzes website behavior on its own, without human-written test cases, and it finds issues through intent-based checks rather than by running prewritten scripts.

What this means for QA teams

If your AI testing tool requires humans to define test cases, you've automated execution. If your tool generates tests from design specs and survives refactoring, you've automated definition.

The second eliminates maintenance. The first just makes maintenance slightly faster.

The takeaway

AI testing tools that generate Selenium scripts automate the wrong part of QA.

The bottleneck isn't writing test code. It's defining what to test and maintaining tests through refactoring.

Autonomous systems eliminate both.
