The QA bottleneck: why traditional approaches fail at scale

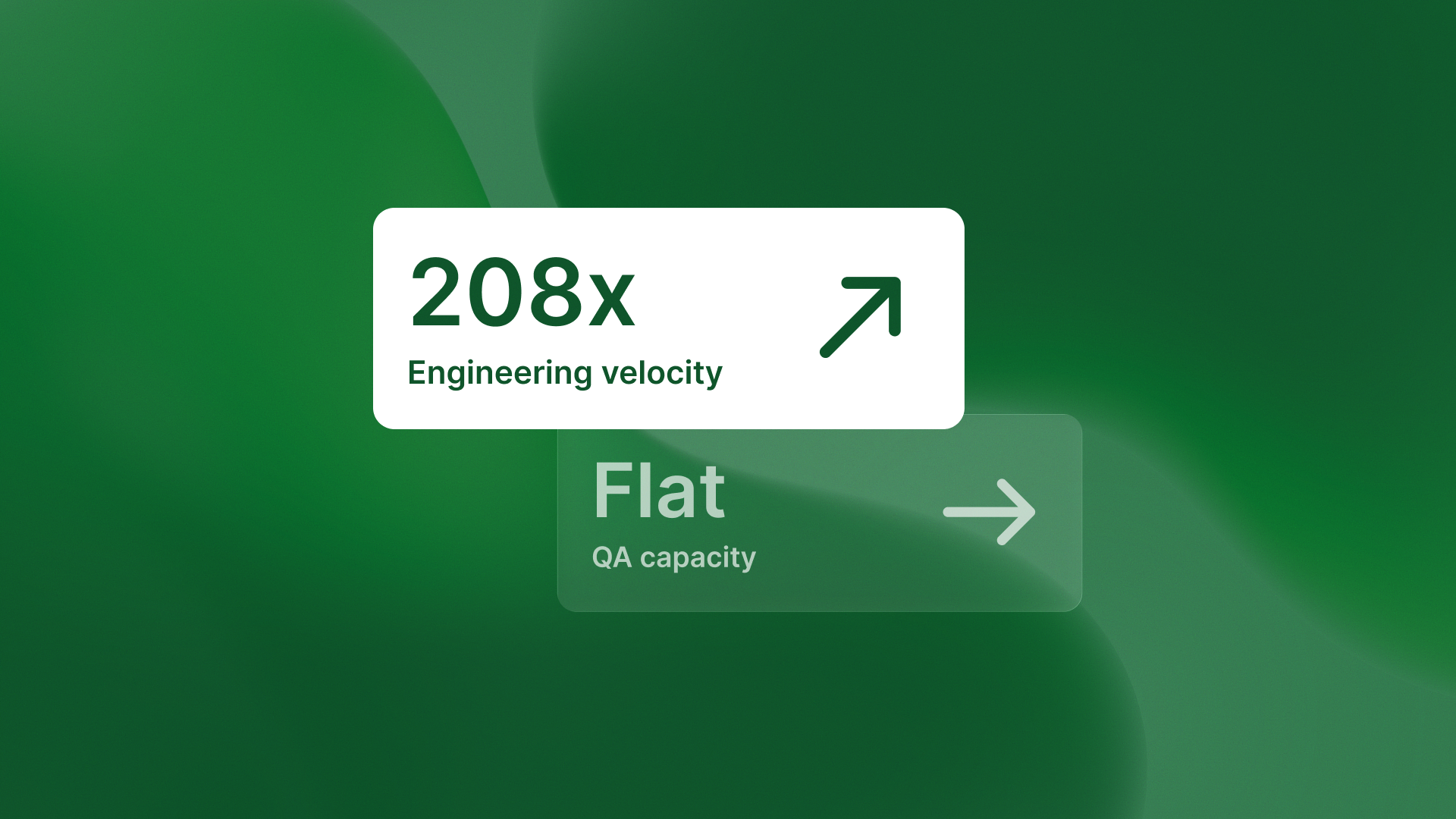

Growth-stage engineering teams face an impossible tradeoff. Scale QA proportionally and costs explode. Accept slower release cycles and competitors win. Implement test automation and watch it break on every refactor.

This isn't a hypothetical problem. The share of teams with large QA groups rose from 17% in 2023 to 30% in 2026. This reflects continued scaling challenges, even with automation investments. Engineering leaders have tried three traditional paths, and all three fail at scale.

Path 1: hire QA engineers proportionally

The first approach is simple math. As your engineering team grows from 10 to 100, you scale QA headcount proportionally. For every three engineers shipping features, you hire one QA engineer to test them.

This works until it doesn't. QA-to-engineer ratios climb toward 1:3, and costs balloon faster than revenue. You're burning cash on headcount while QA cycle time still stretches to two weeks. The math breaks before you hit Series C.
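The headcount arithmetic behind this path can be sketched in a few lines. The function name and the sample team sizes below are illustrative assumptions; only the one-QA-per-three-engineers ratio comes from the text above.

```python
import math


def qa_headcount(engineers: int, engineers_per_qa: int = 3) -> int:
    """QA hires needed when one QA engineer covers `engineers_per_qa` engineers."""
    return math.ceil(engineers / engineers_per_qa)


# Illustrative team sizes (assumed, not taken from any dataset).
for team_size in (10, 30, 100):
    qa = qa_headcount(team_size)
    share = qa / (team_size + qa)
    print(f"{team_size} engineers -> {qa} QA engineers ({share:.0%} of total headcount)")
```

QA headcount grows in lockstep with engineering, so QA holds a roughly constant quarter of the payroll however large the team gets. Hiring at this ratio never makes testing cheaper per engineer.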

Path 2: accept slower release cycles

The second path is accepting reality. You can't hire QA fast enough, so you slow down releases. Two-week QA cycles become the bottleneck in your DevOps pipeline, and competitive velocity suffers.

Less than 20% of testing is automated in most organizations today. DevOps pipelines depend on automated checks to ship quickly, so that gap creates a major bottleneck.

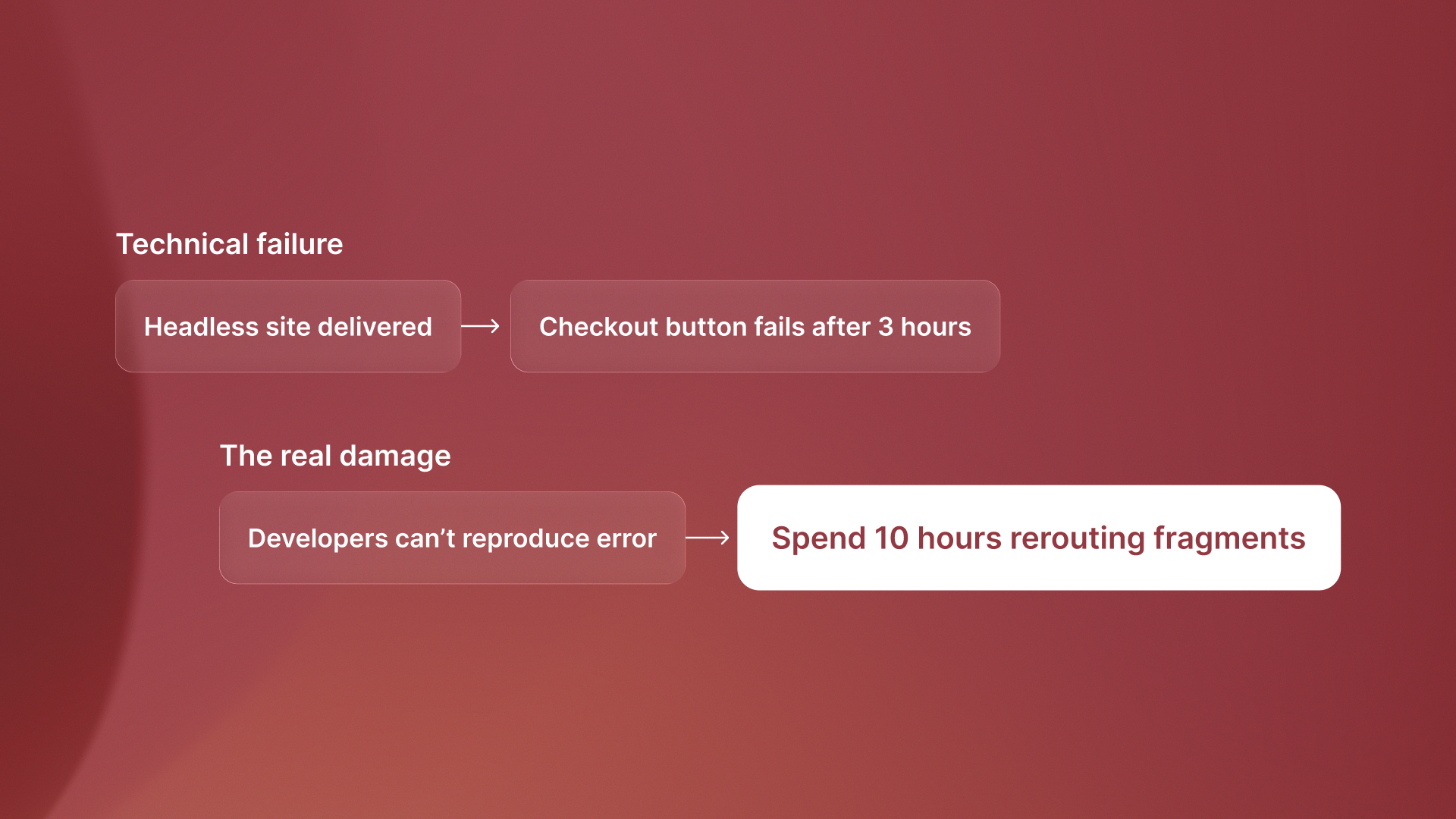

Path 3: implement test automation that breaks

The third path promises salvation: test automation frameworks like Selenium, Cypress, or Playwright. You invest months building test suites that execute human-written scripts faster than manual testing.

Then you refactor your UI, and every test breaks. CSS selectors change, and suddenly you're spending more time maintaining test scripts than writing them. The automation becomes brittle because it tests implementation details rather than behavior. Your QA team shifts from testing to test maintenance.
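A toy model makes the brittleness concrete. The sketch below is not Selenium, Cypress, or Playwright code. It uses a hypothetical `Element` type and made-up class names (`btn-primary`, `auth-submit`) to contrast a lookup tied to a CSS class with a lookup tied to what the user sees.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Element:
    role: str       # what the element is to the user, e.g. "button"
    label: str      # visible text
    css_class: str  # styling hook, an implementation detail


def find_by_class(page: List[Element], css_class: str) -> Optional[Element]:
    """Selector-style lookup: depends on a styling detail."""
    return next((el for el in page if el.css_class == css_class), None)


def find_by_role(page: List[Element], role: str, label: str) -> Optional[Element]:
    """Behavior-style lookup: depends on what the user can see and do."""
    return next((el for el in page if el.role == role and el.label == label), None)


# Hypothetical page before and after a UI refactor that only renames a class.
before = [Element("button", "Sign in", "btn-primary")]
after = [Element("button", "Sign in", "auth-submit")]

selector_survives = find_by_class(after, "btn-primary") is not None
behavior_survives = find_by_role(after, "button", "Sign in") is not None
print(f"selector-based lookup survives refactor: {selector_survives}")
print(f"behavior-based lookup survives refactor: {behavior_survives}")
```

Some frameworks already expose this second style. Playwright, for example, offers role-based locators (`get_by_role` in its Python binding). A human still has to choose them and keep the scripts up to date.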

The fourth path: autonomous testing

89% of organizations are testing or rolling out generative AI-based quality engineering (QE) workflows to solve QA scaling issues, but only 15% have reached enterprise-wide implementation. Most AI-powered testing tools just generate Selenium scripts faster, inheriting the same brittleness.

Autonomous testing differs fundamentally from automated testing. Tools like QA flow generate tests from design specs in Figma rather than executing human-written scripts. These intent-based tests verify behavior, not CSS selectors, so they stay valid through code refactors that break traditional automation.

This eliminates the maintenance burden entirely. When you refactor your login flow, tests generated from the original design spec still work. They check that the user can sign in. They do not check for a button with a particular CSS class.
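The idea of checking intent rather than implementation can be sketched as follows. This is a hypothetical illustration, not how QA flow or any specific tool works. The `INTENT` structure, both login functions, and the sample credentials are all assumptions made for the example.

```python
from typing import Callable, Dict

# Hypothetical intent spec: an outcome the user cares about, with no selectors.
INTENT: Dict = {
    "name": "user can sign in",
    "given": {"email": "user@example.com", "password": "correct-horse"},
    "expect": "signed_in",
}


def login_v1(email: str, password: str) -> str:
    """Original flow: one form that takes both fields at once."""
    return "signed_in" if email and password else "error"


def login_v2(email: str, password: str) -> str:
    """Refactored flow: email step first, then a separate password step."""
    if not email:
        return "error"
    if not password:
        return "error"
    return "signed_in"


def check_intent(intent: Dict, flow: Callable[..., str]) -> bool:
    """Pass when the flow produces the outcome the intent describes."""
    return flow(**intent["given"]) == intent["expect"]


for flow in (login_v1, login_v2):
    print(f"{flow.__name__}: {INTENT['name']} -> {check_intent(INTENT, flow)}")
```

The same spec passes against both versions because it names an outcome, not the structure that produces it.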

The takeaway

Automated testing executes human-defined test cases faster. Autonomous testing generates tests from design intent. The difference determines whether test automation scales or becomes another maintenance burden. The industry shift is already underway. The 89% adoption rate shows demand, but the 15% enterprise-scale gap reveals implementation challenges. Companies solving this aren't just moving faster. They're eliminating the tradeoff between speed, quality, and cost entirely.

Try QA flow if you're ready to move beyond brittle test automation.
